
SimpChain is the simplest LLM development toolkit

SimpChain is a pared-down large language model (LLM) development toolkit. It's designed for developers who prefer simplicity and a hands-on approach to learning and working. This toolkit cuts out unnecessary complexity, aiming to provide you with only the essentials you need to build your own language models.

Autodoc is an experimental toolkit for auto-generating codebase documentation for git repositories using Large Language Models, like GPT-4 or Alpaca. Autodoc can be installed in your repo in about 5 minutes. It indexes your codebase through a depth-first traversal of all repository contents and calls an LLM to write documentation for each file and folder. These documents can be combined to describe the different components of your system and how they work together.
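To make the indexing step concrete, here is a minimal TypeScript sketch of a depth-first walk that documents every file and folder. It is not Autodoc's implementation. The `summarize` callback is a hypothetical stand-in for the LLM call, and the names `documentTree`, `Summarize`, and the ignore list are illustrative. Folders are visited post-order, so each folder's summary is built from the summaries of its children.

```typescript
import * as fs from "node:fs";
import * as path from "node:path";

// Hypothetical stand-in for the LLM call: receives what to document, returns text.
type Summarize = (kind: "file" | "folder", relPath: string, input: string) => string;

// Illustrative ignore list (an assumption, not Autodoc's actual configuration).
const IGNORED = new Set([".git", "node_modules"]);

// Depth-first, post-order walk: everything inside `dir` is documented before `dir`
// itself, so the folder summary can be written from its children's summaries.
function documentTree(root: string, dir: string, summarize: Summarize, out: Map<string, string>): string {
  const childDocs: string[] = [];
  const entries = fs
    .readdirSync(dir, { withFileTypes: true })
    .sort((a, b) => a.name.localeCompare(b.name)); // deterministic visiting order
  for (const entry of entries) {
    if (IGNORED.has(entry.name)) continue;
    const full = path.join(dir, entry.name);
    const rel = path.relative(root, full);
    if (entry.isDirectory()) {
      childDocs.push(documentTree(root, full, summarize, out)); // descend first
    } else if (entry.isFile()) {
      const doc = summarize("file", rel, fs.readFileSync(full, "utf8"));
      out.set(rel, doc);
      childDocs.push(doc);
    }
  }
  const relDir = path.relative(root, dir) || ".";
  const folderDoc = summarize("folder", relDir, childDocs.join("\n\n"));
  out.set(relDir, folderDoc);
  return folderDoc;
}
```

Calling `documentTree(repoRoot, repoRoot, summarize, docs)` fills `docs` with one entry per file and folder; a real tool would also persist those entries alongside the code.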

The generated documentation lives in your codebase, and travels where your code travels. Developers who download your code can use the doc command to ask questions about your codebase and get highly specific answers with reference links back to code files.

In the near future, documentation will be re-indexed as part of your CI pipeline, so it is always up to date. If you're interested in contributing to this work, see this issue.

Autodoc is in the early stages of development. It is functional, but not ready for production use. Things may break, or not work as expected. If you're interested in working on the core Autodoc framework, please see contributing. We would love to have your help!

Question: I'm not getting good responses. How can I improve response quality?

Answer: Autodoc is in the early stages of development. As such, response quality can vary widely based on the type of project you're indexing and how questions are phrased. A few tips for writing good queries:

- Be specific with your questions. Ask things like "What are the different components of authorization in this system?" rather than "explain auth". This will help Autodoc select the right context to get the best answer for your question.

- Use GPT-4. GPT-4 is substantially better at understanding code compared to GPT-3.5 and this understanding carries over into writing good documentation as well. If you don't have access, sign up here.
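Why does specificity help? Question-answering tools of this kind generally embed the question, retrieve the stored documentation chunks most similar to it, and pass only those chunks to the model. The TypeScript sketch below illustrates that general retrieval technique with cosine similarity over toy, hand-written vectors. It assumes embeddings already exist and is not Autodoc's code; `Chunk`, `cosine`, and `topK` are hypothetical names. A vague query such as "explain auth" tends to land near many loosely related chunks, so the top results are less likely to be the ones you need.

```typescript
// A stored piece of generated documentation plus its (precomputed) embedding vector.
interface Chunk {
  source: string;
  embedding: number[];
}

// Cosine similarity: 1 for vectors pointing the same way, 0 for orthogonal ones.
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the k chunks most similar to the query; only these become the model's context.
function topK(query: number[], chunks: Chunk[], k: number): Chunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosine(query, chunk.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((scored) => scored.chunk);
}

// Toy 3-dimensional "embeddings"; real embedding vectors have far more dimensions.
const chunks: Chunk[] = [
  { source: "auth/login.ts", embedding: [1, 0, 0] },
  { source: "ui/button.ts", embedding: [0, 1, 0] },
  { source: "auth/session.ts", embedding: [0.9, 0.1, 0] },
];
console.log(topK([1, 0, 0], chunks, 2).map((c) => c.source));
```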

Below are a few examples of how Autodoc can be used.

- Autodoc - This repository contains documentation for itself, generated by Autodoc. It lives in the .autodoc folder. Follow the instructions here to learn how to query it.

- TolyGPT.com - TolyGPT is an Autodoc chatbot trained on the Solana validator codebase and deployed to the web for easy access. In the near future, Autodoc will support a web version in addition to the existing CLI tool.

Autodoc requires Node v18.0.0 or greater. v19.0.0 or greater is recommended. Make sure you're running the proper version:

$ node -v

Example output:

v19.8.1

Install the Autodoc CLI tool as a global NPM module:

$ npm install -g @context-labs/autodoc

This command installs the Autodoc CLI tool that will allow you to create and query Autodoc indexes.

Run doc to see the available commands.
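If you would rather enforce the Node.js requirement from a setup script than by reading `node -v` output, the TypeScript sketch below classifies a version string against the thresholds stated above (v18.0.0 required, v19.0.0 or greater recommended). The function `checkNode` and its status labels are hypothetical and not part of Autodoc.

```typescript
type NodeStatus = "ok" | "upgrade-recommended" | "unsupported";

// Classify a Node.js version string such as "19.8.1" or "v18.0.0":
// v18.0.0+ is required, v19.0.0+ is recommended.
function checkNode(version: string = process.versions.node): NodeStatus {
  const major = Number.parseInt(version.replace(/^v/, "").split(".")[0], 10);
  if (Number.isNaN(major) || major < 18) return "unsupported";
  return major >= 19 ? "ok" : "upgrade-recommended";
}

const status = checkNode();
if (status === "unsupported") {
  // A setup script could also set process.exitCode = 1 here to fail the step.
  console.error(`Node ${process.versions.node} is too old for Autodoc; install v18.0.0 or newer.`);
} else {
  console.log(`Node ${process.versions.node}: ${status}`);
}
```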

We'll use the Autodoc repository as an example to demonstrate how querying in Autodoc works.

Clone Autodoc and change directory to get started:

$ git clone https://github.com/context-labs/autodoc.git

$ cd autodoc

Right now Autodoc only supports OpenAI. Make sure you have your OpenAI API key exported in your current session:

$ export OPENAI_API_KEY=<YOUR_KEY_HERE>

To start the Autodoc query CLI, run:

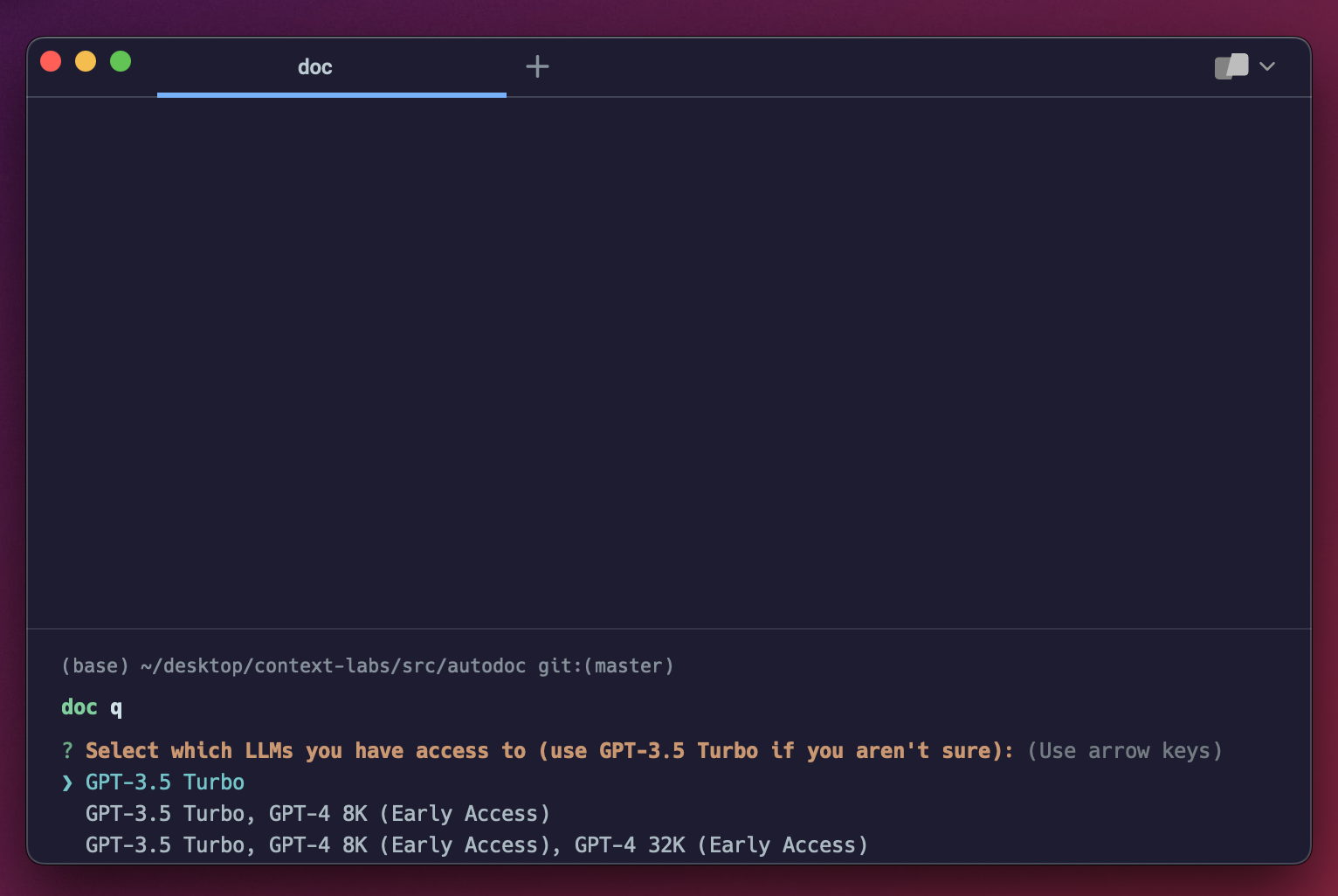

$ doc qIf this is your first time running doc q, you'll get a screen that prompts you to select which GPT models you have access to. Select whichever is appropriate for your level of access. If you aren't sure, select the first option:

You're now ready to query documentation for the Autodoc repository:

This is the core querying experience. It's very basic right now, with plenty of room for improvement. If you're interested in improving the Autodoc CLI querying experience, check out this issue.

Follow the steps below to generate documentation for your own repository using Autodoc.

Change directory into the root of your project:

$ cd $PROJECT_ROOT

Make sure your OpenAI API key is available in the current session:

$ export OPENAI_API_KEY=<YOUR_KEY_HERE>

Run the init command:

$ doc init

You will be prompted to enter the name of your project and its GitHub URL, and to select which GPT models you have access to. If you aren't sure which models you have access to, select the first option. This command will generate an autodoc.config.json file in the root of your project to store these values. This file should be checked into git.

Note: Do not skip entering these values or indexing may not work.
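Because indexing may not work if these values are left blank, a small pre-flight check can help. The TypeScript sketch below reads autodoc.config.json and reports empty fields. The field names `name`, `repositoryUrl`, and `llms` are assumptions about how `doc init` stores the project name, GitHub URL, and model list; compare them with your generated file before relying on this. `missingConfigValues` and `checkConfigFile` are hypothetical helpers.

```typescript
import * as fs from "node:fs";

// Assumed field names for the values `doc init` asks for; verify against your file.
const REQUIRED_FIELDS = ["name", "repositoryUrl", "llms"] as const;

// Return the required fields that are missing, blank strings, or empty arrays.
function missingConfigValues(config: Record<string, unknown>): string[] {
  return REQUIRED_FIELDS.filter((field) => {
    const value = config[field];
    if (value === undefined || value === null) return true;
    if (typeof value === "string") return value.trim() === "";
    if (Array.isArray(value)) return value.length === 0;
    return false;
  });
}

// Read the config from disk (defaults to the project root) and check it.
function checkConfigFile(file: string = "autodoc.config.json"): string[] {
  const config = JSON.parse(fs.readFileSync(file, "utf8")) as Record<string, unknown>;
  return missingConfigValues(config);
}
```

Run from the project root, `checkConfigFile()` returns an empty array when every assumed field has a value; anything it returns should be filled in before running `doc index`.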

Prompt Configuration: You'll find prompt directions specified in prompts.ts, with some snippets customizable in autodoc.config.json. The current prompts are developer-focused and assume your repo is code-focused. We will have more reference templates in the future.

Run the index command:

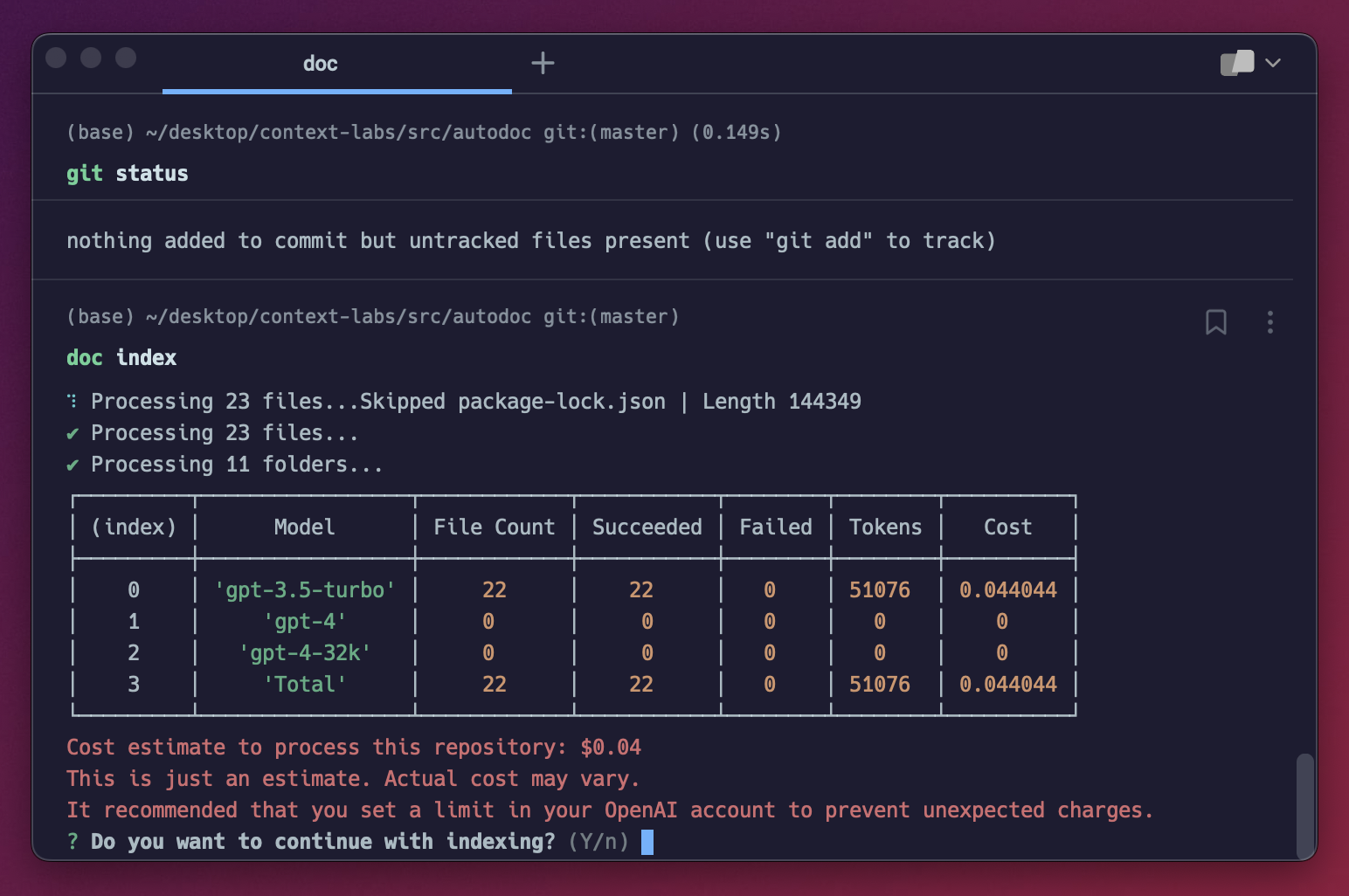

doc indexYou should see a screen like this:

This screen estimates the cost of indexing your repository. You can also access this screen via the doc estimate command. If you've already indexed once, doc index will only reindex files that have changed since the previous run.
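One common way to decide which files have changed is to store a content hash for each file and compare it on the next run. This README does not say how Autodoc tracks changes, so treat the TypeScript sketch below as an illustration of the technique only; `checksum`, `filesToReindex`, and the in-memory maps are hypothetical.

```typescript
import { createHash } from "node:crypto";

// SHA-256 of the file content: unchanged content always yields the same digest.
function checksum(content: string): string {
  return createHash("sha256").update(content).digest("hex");
}

// Compare current file contents (path -> content) with the digests saved on the
// previous run (path -> digest). Returns the paths that are new or modified, plus
// the digest table to persist for the next run.
function filesToReindex(
  current: Map<string, string>,
  previous: Map<string, string>,
): { changed: string[]; next: Map<string, string> } {
  const changed: string[] = [];
  const next = new Map<string, string>();
  current.forEach((content, file) => {
    const digest = checksum(content);
    next.set(file, digest);
    if (previous.get(file) !== digest) changed.push(file);
  });
  return { changed, next };
}
```

On a first run the previous table is empty, so every file is reindexed; afterwards only files whose digest differs are sent to the model again.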

For every file in your project, Autodoc calculates the number of tokens in the file based on its content. The more lines of code, the larger the number of tokens. Using this number, it determines which model to use on a per-file basis, always choosing the cheapest model whose context length supports the number of tokens in the file. If you're interested in helping make model selection configurable in Autodoc, check out this issue.
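The selection rule described above (the cheapest model whose context window fits the file) can be sketched in TypeScript as follows. The model list, the `relativeCost` ranks, and the ~4-characters-per-token estimate are illustrative assumptions: the ranks only encode price order, not real prices, and a real implementation would count tokens with the model's tokenizer. `estimateTokens` and `selectModel` are hypothetical names.

```typescript
interface ModelOption {
  name: string;
  maxTokens: number; // context window size
  relativeCost: number; // placeholder price ordering, NOT real prices
}

// Illustrative list using the context sizes of these OpenAI models (4K, 8K, 32K).
const MODELS: ModelOption[] = [
  { name: "gpt-3.5-turbo", maxTokens: 4096, relativeCost: 1 },
  { name: "gpt-4", maxTokens: 8192, relativeCost: 2 },
  { name: "gpt-4-32k", maxTokens: 32768, relativeCost: 3 },
];

// Rough heuristic (~4 characters per token); a real tool would use a tokenizer.
function estimateTokens(content: string): number {
  return Math.ceil(content.length / 4);
}

// Keep the models whose context fits the file, then pick the cheapest one.
// Returns undefined when the file is too large for every model.
function selectModel(tokens: number, models: ModelOption[] = MODELS): ModelOption | undefined {
  return models
    .filter((m) => tokens <= m.maxTokens)
    .sort((a, b) => a.relativeCost - b.relativeCost)[0];
}
```

With this list, a 3,000-token file goes to gpt-3.5-turbo and a 6,000-token file to gpt-4. A production version would also reserve room in the context window for the prompt and the model's response.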

Note: This naive model selection strategy means that files under ~4000 tokens will be documented using GPT-3.5, which will result in less accurate documentation. We recommend using GPT-4 8K at a minimum. Indexing with GPT-4 results in significantly better output. You can apply for access here.

For large projects, the cost can be several hundred dollars. View OpenAI pricing here.
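At its core, a cost estimate like this is token count multiplied by price per token, summed over files. The TypeScript sketch below makes that arithmetic explicit. `estimateCostUSD` and its input shape are hypothetical; prices are passed in (look up current values on OpenAI's pricing page rather than hard-coding them), and only prompt tokens are counted, so the output tokens the model generates would add to the real bill.

```typescript
// One file's share of an indexing run, as produced by a model-selection step.
interface FileEstimate {
  path: string;
  tokens: number;
  model: string;
}

// Sum (tokens / 1000) * price over all files. Prices are USD per 1,000 input tokens,
// supplied by the caller; output tokens are not included in this rough estimate.
function estimateCostUSD(files: FileEstimate[], pricePer1K: Record<string, number>): number {
  let total = 0;
  for (const file of files) {
    const price: number | undefined = pricePer1K[file.model];
    if (price === undefined) {
      throw new Error(`No price configured for model "${file.model}"`);
    }
    total += (file.tokens / 1000) * price;
  }
  return total;
}
```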

In the near future, we will support self-hosted models, such as Llama and Alpaca. Read this issue if you're interested in contributing to this work.

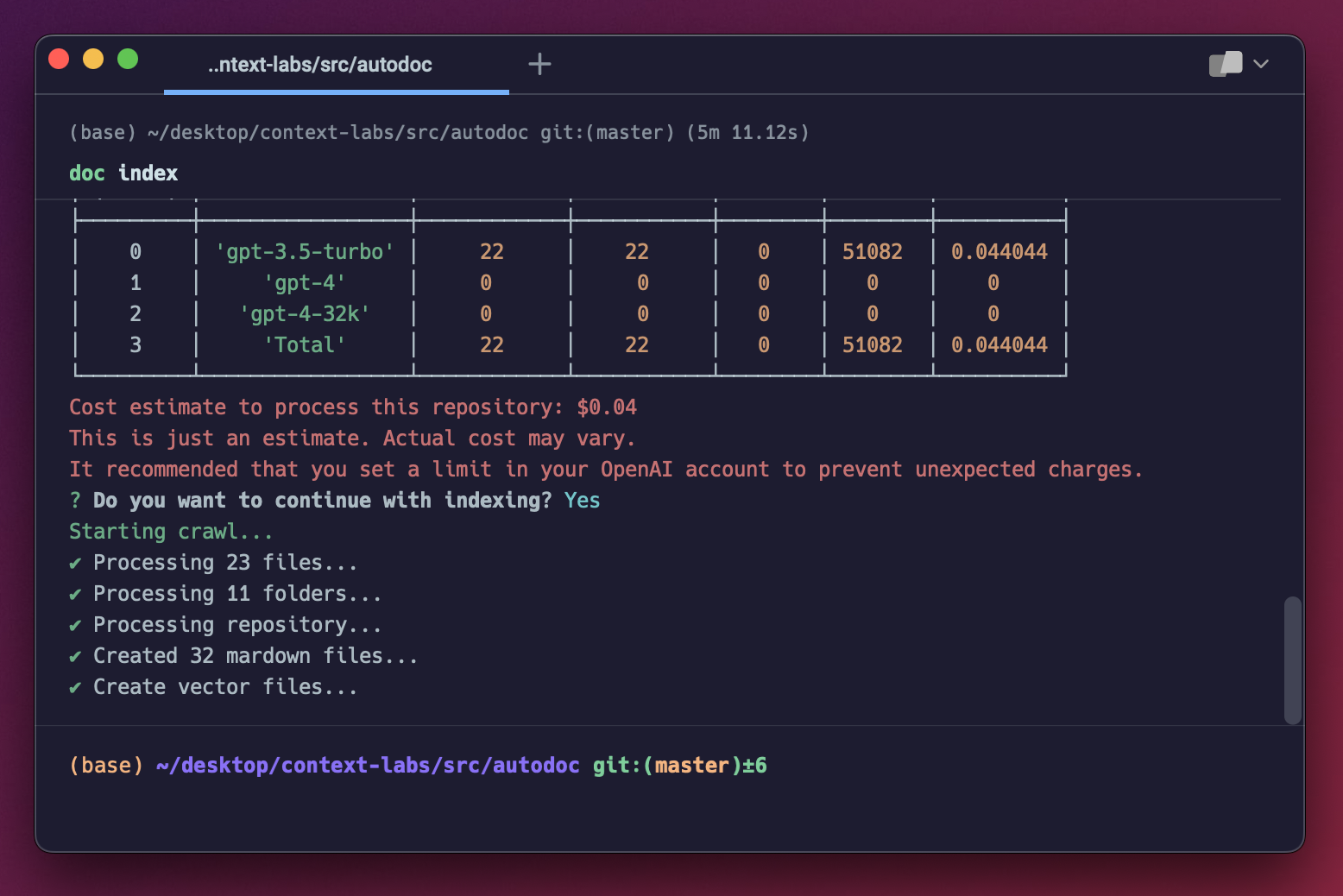

When you're done repository is done being indexed, you should see a screen like this:

You can now query your application using the steps outlined in querying.

There is a small group of us working full time on Autodoc. Join us on Discord, or follow us on Twitter for updates. We'll be posting regularly and continuing to improve the Autodoc application. Want to contribute? Read below.

As an open source project in a rapidly developing field, we are extremely open to contributions, whether it be in the form of a new feature, improved infra, or better documentation.

For detailed information on how to contribute, see here.