EasyMocap is an open-source toolbox for markerless human motion capture from RGB videos. This project provides motion capture demos in a range of settings.

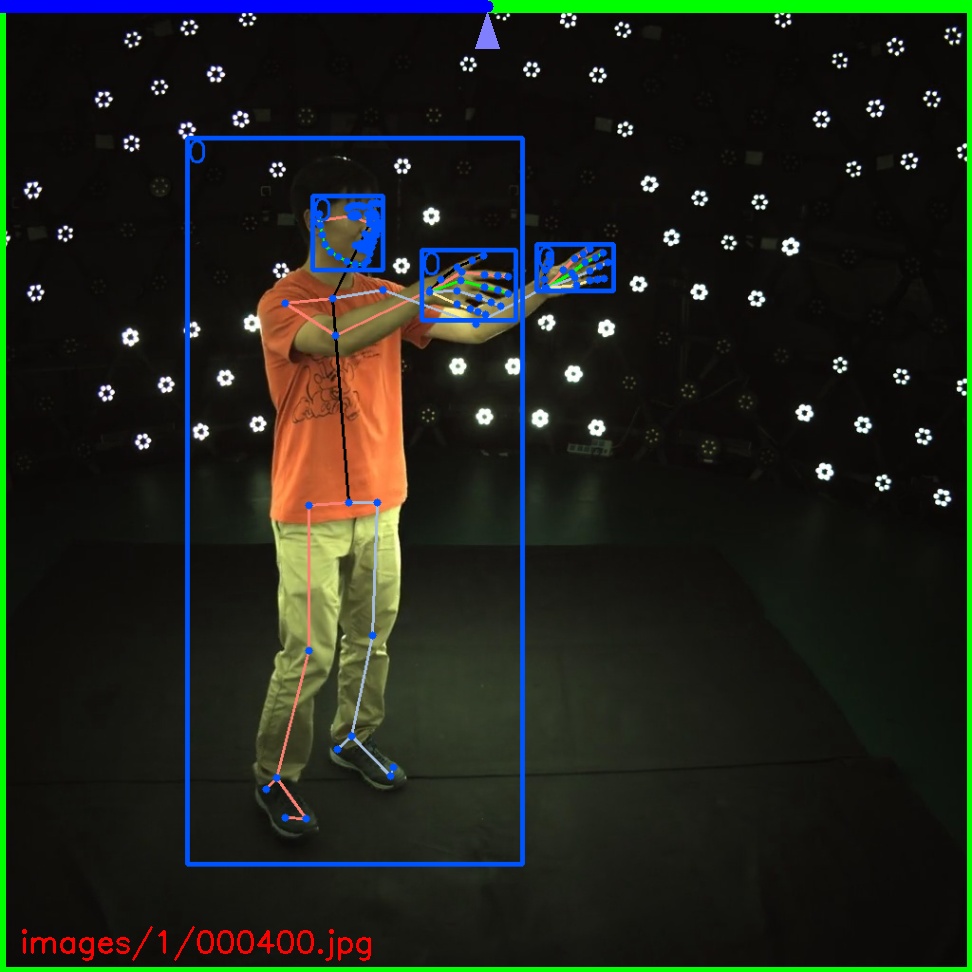

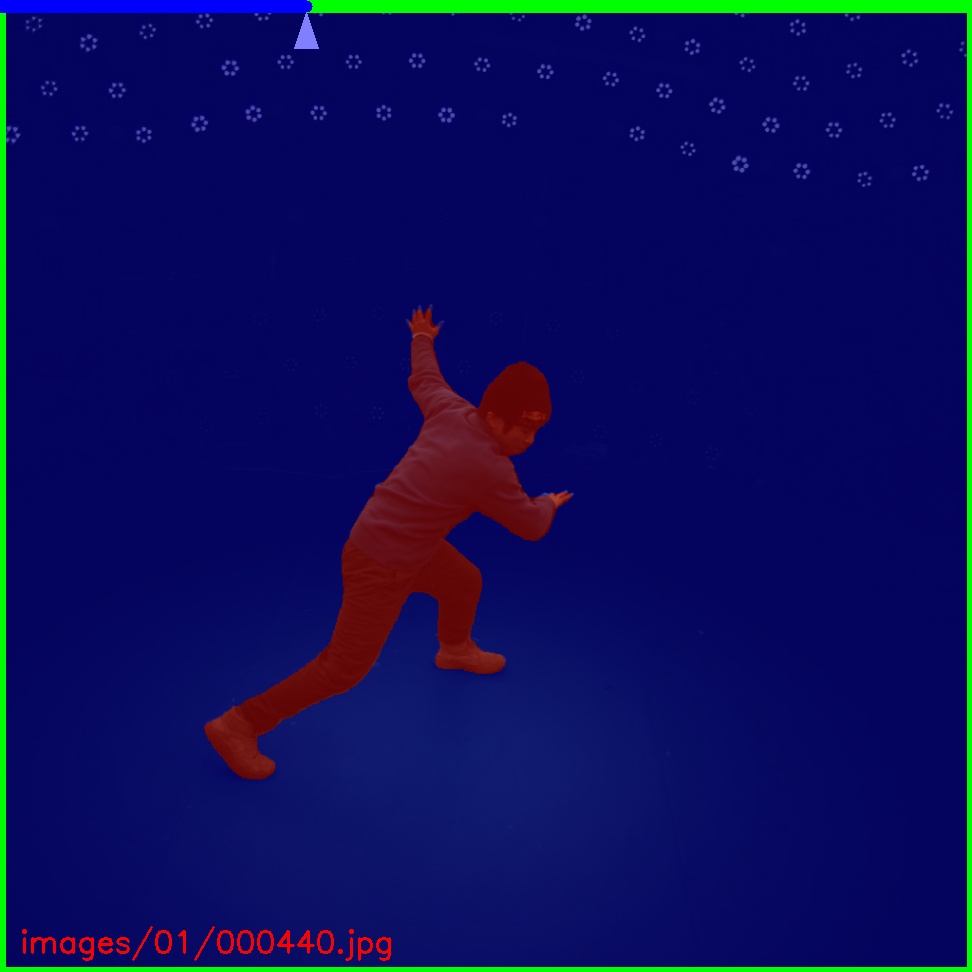

This is the basic code for fitting SMPL[1]/SMPL+H[2]/SMPL-X[3]/MANO[2] models to capture body+hand+face poses from multiple views.
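As a rough illustration of what "from multiple views" involves, the sketch below triangulates a single 3D keypoint from its 2D detections in several calibrated cameras, which is a common first step before fitting a skeleton or body model. It is not EasyMocap's API: the function names `solve3`, `triangulate`, and `project` are hypothetical, the cameras are assumed to be given as 3x4 projection matrices, and the method shown is plain linear least squares rather than whatever the toolbox uses internally.

```python
# Illustrative sketch only; all names are hypothetical, not EasyMocap functions.

def solve3(A, b):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("degenerate camera configuration")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, 3):
            f = M[r][col] / M[col][col]
            for c in range(col, 4):
                M[r][c] -= f * M[col][c]
    x = [0.0, 0.0, 0.0]
    for i in reversed(range(3)):
        s = M[i][3] - sum(M[i][j] * x[j] for j in range(i + 1, 3))
        x[i] = s / M[i][i]
    return x


def triangulate(projections, pixels):
    """Least-squares 3D point from two or more views.

    projections: list of 3x4 camera matrices (nested lists).
    pixels: list of (u, v) observations, one per camera.
    """
    rows, rhs = [], []
    for P, (u, v) in zip(projections, pixels):
        # Each view contributes two linear equations in (x, y, z):
        #   (u * P[2] - P[0]) . (x, y, z, 1) = 0
        #   (v * P[2] - P[1]) . (x, y, z, 1) = 0
        for coord, k in ((u, 0), (v, 1)):
            r = [coord * P[2][j] - P[k][j] for j in range(4)]
            rows.append(r[:3])
            rhs.append(-r[3])
    # Normal equations: (A^T A) X = A^T b
    AtA = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    Atb = [sum(r[i] * t for r, t in zip(rows, rhs)) for i in range(3)]
    return solve3(AtA, Atb)


def project(P, X):
    """Project a 3D point X with a 3x4 camera matrix P to pixel coordinates."""
    Xh = list(X) + [1.0]
    h = [sum(P[i][j] * Xh[j] for j in range(4)) for i in range(3)]
    return h[0] / h[2], h[1] / h[2]


if __name__ == "__main__":
    # Two toy cameras: one at the origin, one shifted by 1 unit along x.
    P1 = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0]]
    P2 = [[1, 0, 0, -1], [0, 1, 0, 0], [0, 0, 1, 0]]
    X_true = [0.5, 0.2, 4.0]
    obs = [project(P1, X_true), project(P2, X_true)]
    print(triangulate([P1, P2], obs))
```

In practice the same linear system would be built with NumPy and weighted by the 2D detector's confidence; the pure-Python version is used here only to keep the example dependency-free.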

With our proposed method, we release two large datasets of human motion: LightStage and Mirrored-Human. See the website for more details.

- 08/09/2021: Add a colab demo here.

- 06/28/2021: The Multi-view Multi-person part is released!

- 06/10/2021: The real-time 3D visualization part is released!

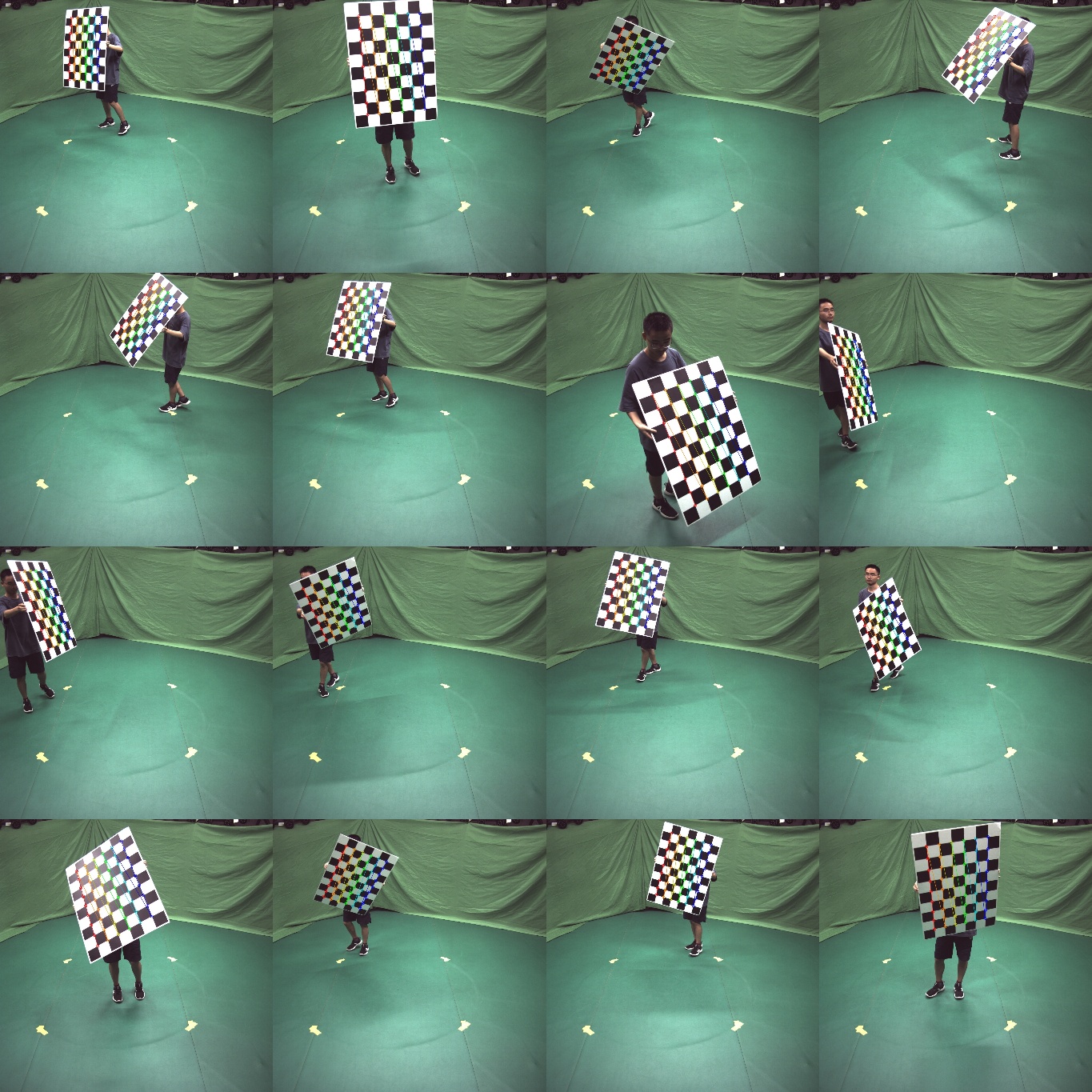

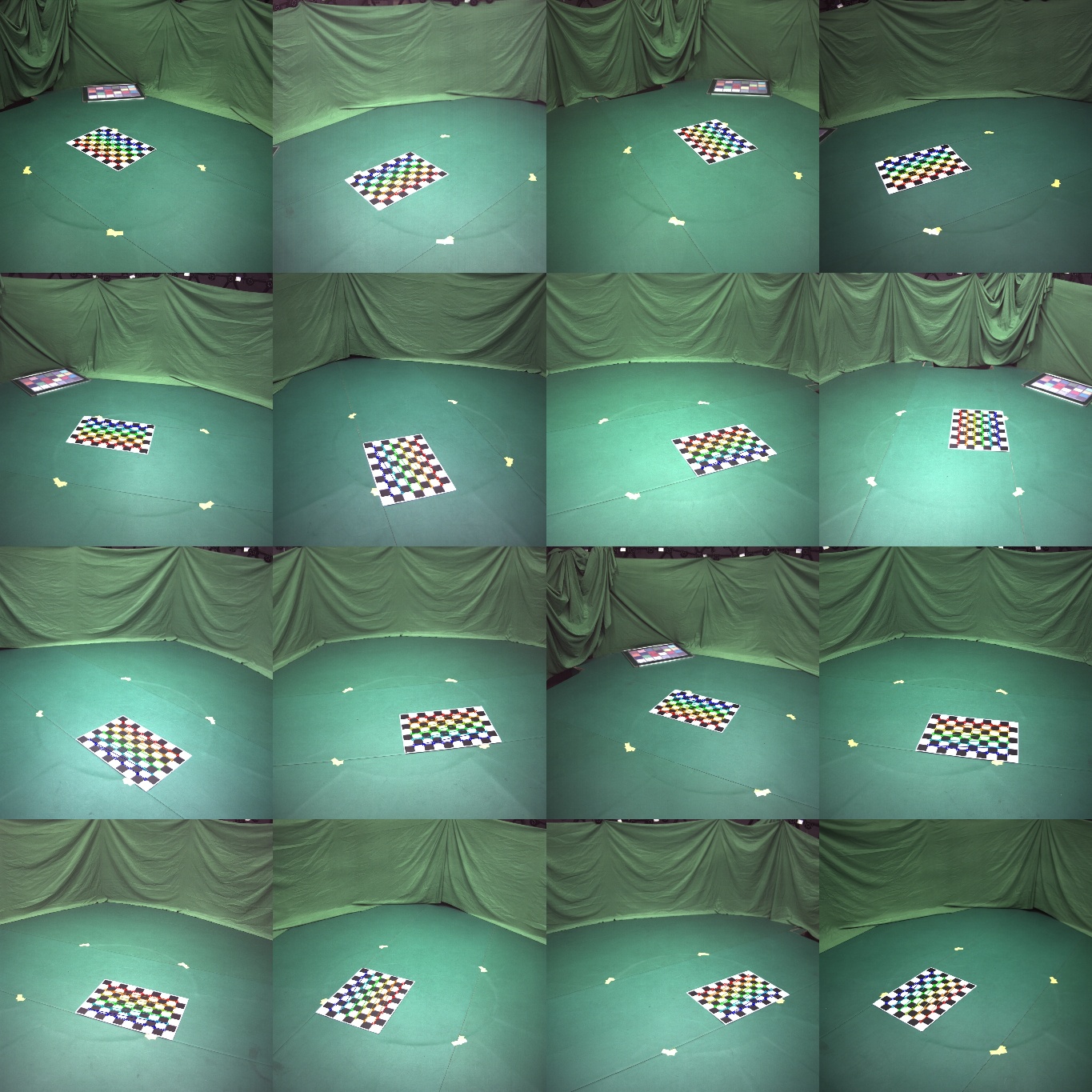

- 04/11/2021: The calibration tool and the annotator are released.

- 04/11/2021: Mirrored-Human part is released.

See doc/install for more instructions.

Here are the great works this project is built upon:

- SMPL models and layer are from MPII SMPL-X model.

- Some functions are borrowed from SPIN, VIBE, and SMPLify-X.

- The method for fitting 3D skeleton and SMPL model is similar to TotalCapture, without using point clouds.

- We integrate some easy-to-use functions for previous great work:

  - easymocap/estimator/SPIN: an SMPL estimator[5]

  - easymocap/estimator/YOLOv4: an object detector[6] (Coming soon)

  - easymocap/estimator/HRNet: a 2D human pose estimator[7] (Coming soon)

Please open an issue if you have any questions. We appreciate all contributions to improve our project.

EasyMocap is built by researchers from the 3D vision group of Zhejiang University: Qing Shuai, Qi Fang, Junting Dong, Sida Peng, Di Huang, Hujun Bao, and Xiaowei Zhou.

We would like to thank Wenduo Feng, Di Huang, Yuji Chen, Hao Xu, Qing Shuai, Qi Fang, Ting Xie, Junting Dong, Sida Peng, and Xiaopeng Ji, who are the performers in the sample data. We would also like to thank everyone who has helped EasyMocap in any way.

This project is part of our work on iMocap, Mirrored-Human, mvpose, and Neural Body.

Please consider citing these works if you find this repo useful for your projects.

@inproceedings{dong2020motion,

title={Motion capture from internet videos},

author={Dong, Junting and Shuai, Qing and Zhang, Yuanqing and Liu, Xian and Zhou, Xiaowei and Bao, Hujun},

booktitle={European Conference on Computer Vision},

pages={210--227},

year={2020},

organization={Springer}

}

@inproceedings{peng2021neural,

title={Neural Body: Implicit Neural Representations with Structured Latent Codes for Novel View Synthesis of Dynamic Humans},

author={Peng, Sida and Zhang, Yuanqing and Xu, Yinghao and Wang, Qianqian and Shuai, Qing and Bao, Hujun and Zhou, Xiaowei},

booktitle={CVPR},

year={2021}

}

@inproceedings{fang2021mirrored,

title={Reconstructing 3D Human Pose by Watching Humans in the Mirror},

author={Fang, Qi and Shuai, Qing and Dong, Junting and Bao, Hujun and Zhou, Xiaowei},

booktitle={CVPR},

year={2021}

}

@inproceedings{dong2021fast,

title={Fast and Robust Multi-Person 3D Pose Estimation and Tracking from Multiple Views},

author={Dong, Junting and Fang, Qi and Jiang, Wen and Yang, Yurou and Bao, Hujun and Zhou, Xiaowei},

booktitle={T-PAMI},

year={2021}

}

[1] Loper, Matthew, et al. "SMPL: A skinned multi-person linear model." ACM Transactions on Graphics (TOG) 34.6 (2015): 1-16.

[2] Romero, Javier, Dimitrios Tzionas, and Michael J. Black. "Embodied hands: Modeling and capturing hands and bodies together." ACM Transactions on Graphics (ToG) 36.6 (2017): 1-17.

[3] Pavlakos, Georgios, et al. "Expressive body capture: 3d hands, face, and body from a single image." Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2019.

Bogo, Federica, et al. "Keep it SMPL: Automatic estimation of 3D human pose and shape from a single image." European conference on computer vision. Springer, Cham, 2016.

[4] Cao, Zhe, et al. "OpenPose: real-time multi-person 2D pose estimation using part affinity fields." arXiv preprint arXiv:1812.08008 (2018).

[5] Kolotouros, Nikos, et al. "Learning to reconstruct 3D human pose and shape via model-fitting in the loop." Proceedings of the IEEE/CVF International Conference on Computer Vision. 2019.

[6] Bochkovskiy, Alexey, Chien-Yao Wang, and Hong-Yuan Mark Liao. "Yolov4: Optimal speed and accuracy of object detection." arXiv preprint arXiv:2004.10934 (2020).

[7] Sun, Ke, et al. "Deep high-resolution representation learning for human pose estimation." Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2019.