A hypothetical music streaming startup has grown its user base and song database and wants to move its data warehouse to a data lake. Its data resides in S3: a directory of JSON logs of user activity on the app, and a directory of JSON metadata on the songs in the app.

I have built an ETL pipeline that extracts their data from S3, processes it using Spark, and loads the data back into S3 as a set of dimensional tables. This will allow their analytics team to continue finding insights into what songs their users are listening to.

In this project, I have built an ETL pipeline for a data lake hosted on S3. The data is loaded from S3, processed into analytics tables using Spark, and loaded back into S3. This Spark process is deployed on a cluster using AWS.
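The extract, transform, and load steps described above can be sketched roughly as follows. This is a minimal illustration and not the contents of etl.py: the function names `run_etl` and `output_path` are hypothetical, the selected columns are taken from the sample song file, and only one table is written.

```python
def output_path(base, table):
    """Join an output base location and a table name into one path."""
    return base.rstrip("/") + "/" + table


def run_etl(song_input, output_base):
    """Read song JSON, build a songs table, and write it as Parquet."""
    # Imported lazily so this module can be loaded without Spark installed.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.appName("data-lake-etl-sketch").getOrCreate()

    # Extract: read the raw song metadata JSON files
    song_df = spark.read.json(song_input)

    # Transform: keep the song-level columns, one row per song
    songs = song_df.select(
        "song_id", "title", "artist_id", "year", "duration"
    ).dropDuplicates(["song_id"])

    # Load: write the table back out in Parquet format
    songs.write.mode("overwrite").parquet(output_path(output_base, "songs"))

    spark.stop()
```

Calling `run_etl` with a glob over the song data directory and an output location of your choice would run the three steps end to end on a machine or cluster where Spark is available.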

My database is a star schema with songplays as its fact table and users, songs, artists, and time as its dimension tables. A star schema is a denormalized database design that allows for simplified queries and faster aggregations on the data. This structure is ideal for OLAP operations such as rolling up, drilling down, slicing, and dicing. The resulting tables are saved in Parquet format.
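To make the roll-up idea concrete, here is a small in-memory sketch in plain Python. The rows and column names are hypothetical and only stand in for the real tables; the point is that each fact row carries a key into a dimension table, so an aggregation needs a single lookup per row.

```python
from collections import Counter

# Hypothetical dimension rows, keyed by artist ID
artists = {
    "A1": {"name": "Artist One"},
    "A2": {"name": "Artist Two"},
}

# Hypothetical fact rows: one row per song play, holding dimension keys
songplays = [
    {"songplay_id": 1, "song_id": "S1", "artist_id": "A1"},
    {"songplay_id": 2, "song_id": "S2", "artist_id": "A1"},
    {"songplay_id": 3, "song_id": "S3", "artist_id": "A2"},
    {"songplay_id": 4, "song_id": "S1", "artist_id": "A1"},
]


def plays_per_artist(facts, artist_dim):
    """Roll song plays up to the artist level with one dimension lookup."""
    counts = Counter()
    for play in facts:
        counts[artist_dim[play["artist_id"]]["name"]] += 1
    return dict(counts)


print(plays_per_artist(songplays, artists))
```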

The data used comes from two datasets that reside in S3. Here are the S3 links for each:

Song data: s3://udacity-dend/song_data

Log data: s3://udacity-dend/log_data

The first dataset is a subset of real data from the Million Song Dataset. Each file is in JSON format and contains metadata about a song and the artist of that song. The files are partitioned by the first three letters of each song's track ID that follow the "TR" prefix. For example, here are file paths to two files in this dataset:

song_data/A/B/C/TRABCEI128F424C983.json

song_data/A/A/B/TRAABJL12903CDCF1A.json
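The partitioning rule can be expressed as a short helper. This is a sketch inferred from the two example paths above; the function name `song_file_path` is hypothetical.

```python
def song_file_path(track_id, root="song_data"):
    """Build the partitioned path for a song file from its track ID."""
    # The partition letters are the three characters after the "TR" prefix
    a, b, c = track_id[2:5]
    return f"{root}/{a}/{b}/{c}/{track_id}.json"


print(song_file_path("TRABCEI128F424C983"))
print(song_file_path("TRAABJL12903CDCF1A"))
```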

And below is an example of what a single song file, TRAABJL12903CDCF1A.json, looks like.

{"num_songs": 1, "artist_id": "ARJIE2Y1187B994AB7", "artist_latitude": null, "artist_longitude": null, "artist_location": "", "artist_name": "Line Renaud", "song_id": "SOUPIRU12A6D4FA1E1", "title": "Der Kleine Dompfaff", "duration": 152.92036, "year": 0}
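As an illustration of how one such record feeds two dimension tables, the sketch below splits the sample above into a song row and an artist row. The output column names and the choice to treat an empty location as missing are assumptions for this example, not necessarily what etl.py does.

```python
import json

raw = (
    '{"num_songs": 1, "artist_id": "ARJIE2Y1187B994AB7", '
    '"artist_latitude": null, "artist_longitude": null, '
    '"artist_location": "", "artist_name": "Line Renaud", '
    '"song_id": "SOUPIRU12A6D4FA1E1", "title": "Der Kleine Dompfaff", '
    '"duration": 152.92036, "year": 0}'
)


def split_song_record(record):
    """Split one song JSON record into a song row and an artist row."""
    song_row = {
        key: record[key]
        for key in ("song_id", "title", "artist_id", "year", "duration")
    }
    artist_row = {
        "artist_id": record["artist_id"],
        "name": record["artist_name"],
        # Assumption: an empty location string is stored as missing
        "location": record["artist_location"] or None,
        "latitude": record["artist_latitude"],
        "longitude": record["artist_longitude"],
    }
    return song_row, artist_row


song_row, artist_row = split_song_record(json.loads(raw))
print(song_row)
print(artist_row)
```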

The second dataset consists of log files in JSON format generated by an event simulator based on the songs in the dataset above. These simulate app activity logs from an imaginary music streaming app based on configuration settings.

The log files in the dataset are partitioned by year and month. For example, here are filepaths to two files in this dataset.

log_data/2018/11/2018-11-12-events.json

log_data/2018/11/2018-11-13-events.json
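The same layout can be written as a helper that maps a calendar date to its log file path. This is a sketch based only on the two example paths above; the function name `log_file_path` is hypothetical, and zero-padding of single-digit months and days is an assumption.

```python
from datetime import date


def log_file_path(day, root="log_data"):
    """Build the year/month partitioned path for one day's event log."""
    return f"{root}/{day:%Y}/{day:%m}/{day:%Y-%m-%d}-events.json"


print(log_file_path(date(2018, 11, 12)))
```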

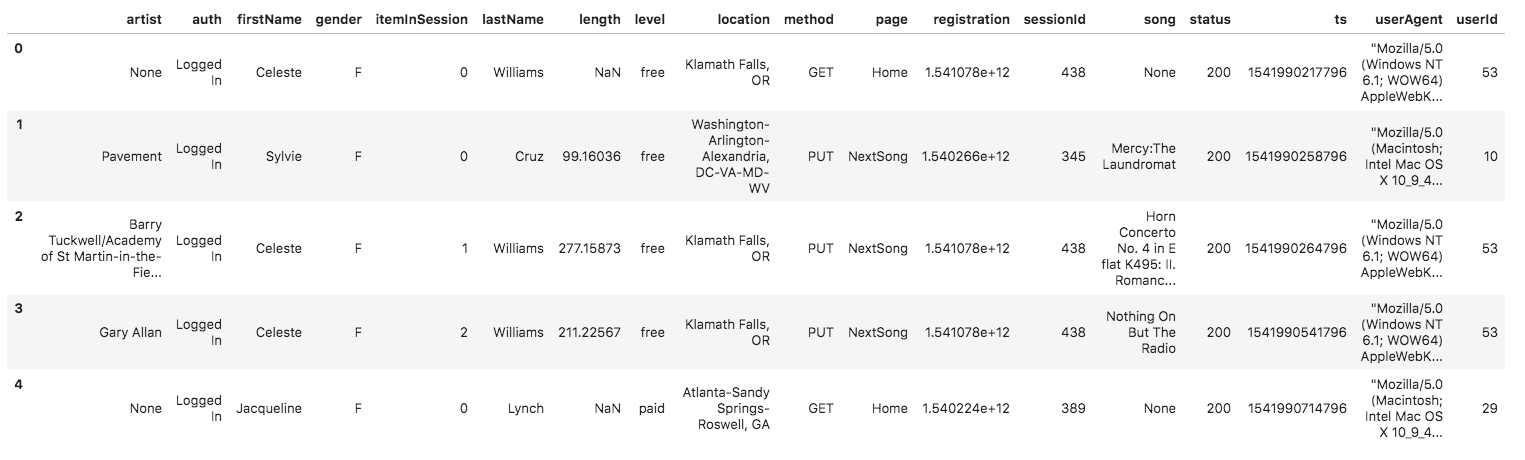

And below is an example of what the data in a log file, 2018-11-12-events.json, looks like.

dl.cfg - Contains the credentials necessary for authenticating with AWS.

etl.py - ETL process for transforming raw data into analytics tables, which are saved as Parquet files.

In order to run this program, dl.cfg must be configured to include your AWS Access Key ID and Secret Access Key. From there, running etl.py will extract the data from S3, process it into analytics tables, and save the output as Parquet files. If desired, the output can be changed to an S3 bucket for improved performance.
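One way to read such a credentials file is with Python's standard `configparser` module, as sketched below. The section name `AWS` and the key names are assumptions about how dl.cfg is laid out, and the values are placeholders; adjust them to match your own file.

```python
import configparser
import os

# Placeholder contents standing in for dl.cfg (layout is an assumption)
SAMPLE_CFG = """
[AWS]
AWS_ACCESS_KEY_ID = your-access-key-id
AWS_SECRET_ACCESS_KEY = your-secret-access-key
"""


def load_credentials(cfg_text):
    """Parse the access key ID and secret key from config text."""
    parser = configparser.ConfigParser()
    parser.read_string(cfg_text)
    section = parser["AWS"]
    return section["AWS_ACCESS_KEY_ID"], section["AWS_SECRET_ACCESS_KEY"]


key_id, secret = load_credentials(SAMPLE_CFG)

# Export the credentials so AWS clients started from this process can see them
os.environ["AWS_ACCESS_KEY_ID"] = key_id
os.environ["AWS_SECRET_ACCESS_KEY"] = secret
```

To read the real file instead of the sample string, `parser.read("dl.cfg")` can be used in place of `read_string`.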