The Netdata Agent provides many eBPF programs to help you troubleshoot and debug how applications interact with the Linux kernel. The ebpf.plugin uses tracepoints, trampolines, and kprobes to collect a wide array of high-value data about the host that would otherwise be impossible to capture.

❗ eBPF monitoring only works on Linux systems and with specific Linux kernels, including all kernels newer than 4.11.0, and all kernels on CentOS 7.6 or later. For kernels older than 4.11.0, improved support is in active development.

This document provides comprehensive details about the ebpf.plugin.

For hands-on configuration and troubleshooting tips, see our tutorial on troubleshooting apps with eBPF metrics.

Netdata uses the following features from the Linux kernel to run eBPF programs:

- Tracepoints are hooks to call specific functions. Tracepoints are more stable than kprobes and are preferred when both options are available.
- Trampolines are bridges between kernel functions and BPF programs. Netdata uses them by default whenever available.
- Kprobes and return probes (kretprobe): Probes can be inserted into virtually any kernel instruction. When eBPF runs in entry mode, it attaches only kprobes for internal functions, monitoring calls and some arguments every time a function is called. You can also change the configuration to use return mode. This lets you monitor the return from these functions and detect possible failures.

In each case, wherever a normal kprobe, kretprobe, or tracepoint would have run its hook function, an eBPF program is run instead, performing various collection logic before letting the kernel continue its normal control flow.

There are other methods to trigger eBPF programs, such as uprobes, but these are not currently supported.

The eBPF collector is installed and enabled by default on most new installations of the Agent. If your Agent is v1.22 or older, you may need to enable the collector yourself.

To enable or disable the entire eBPF collector:

- Navigate to the Netdata config directory.

   cd /etc/netdata

- Use the edit-config script to edit netdata.conf.

   ./edit-config netdata.conf

- Enable the collector by scrolling down to the [plugins] section. Uncomment the line ebpf (not ebpf_process) and set it to yes.

   [plugins]
   ebpf = yes

You can configure the eBPF collector's behavior to fine-tune which metrics you receive and [optimize performance](#performance-optimization).

To edit the ebpf.d.conf:

- Navigate to the Netdata config directory.

   cd /etc/netdata

- Use the edit-config script to edit ebpf.d.conf.

   ./edit-config ebpf.d.conf

You can now edit the behavior of the eBPF collector. The following sections describe each configuration option in detail.

The [global] section defines settings for the whole eBPF collector.

The collector uses two different eBPF programs. These programs rely on the same functions inside the kernel, but they monitor, process, and display different kinds of information.

By default, this plugin uses the entry mode. Changing this mode can create significant overhead on your operating system, but it can also offer valuable information if you are developing or debugging software. The ebpf load mode option accepts the following values:

- entry: This is the default mode. In this mode, the eBPF collector only monitors calls for the functions described in the sections above, and does not show charts related to errors.
- return: In the return mode, the eBPF collector monitors the same kernel functions as entry, but also creates new charts for the return of these functions, such as errors. Monitoring function returns can help in debugging software, such as failing to close file descriptors or creating zombie processes.

The [global] section also accepts the following options:

- update every: Interval, in seconds, at which the eBPF collector sends data to Netdata.
- pid table size: Defines the maximum number of PIDs stored inside the application hash table.

The eBPF collector also creates charts for each running application through an integration with the apps.plugin. This integration helps you understand how specific applications interact with the Linux kernel.

If you want to disable the integration with apps.plugin along with the per-application charts, change the apps setting to no.

[global]

apps = no

When the integration is enabled, the eBPF collector allocates memory for each running process. The total allocated memory depends directly on the kernel version. When the eBPF plugin is running on kernels newer than 4.15, it uses per-CPU maps to speed up hash table updates. This also means that data for the same PID is stored once for each processor it runs on.
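To see why per-CPU maps raise memory use, a back-of-the-envelope estimate helps. A per-CPU hash map keeps one copy of each value per CPU. The sketch below uses a hypothetical per-PID value size and ignores key storage, padding, and kernel bookkeeping. Read its numbers as an illustrative lower bound, not as Netdata's actual usage.

```python
def estimate_map_bytes(pid_entries: int, value_size: int, cpus: int, per_cpu: bool) -> int:
    """Rough lower bound on value storage for a PID-keyed eBPF hash map.

    pid_entries: maximum number of PIDs (for example, the 'pid table size' setting)
    value_size:  bytes stored per PID (hypothetical; depends on the collector's structs)
    cpus:        number of CPUs on the host
    per_cpu:     True for per-CPU maps (kernels newer than 4.15), False otherwise
    """
    copies = cpus if per_cpu else 1
    return pid_entries * value_size * copies


# Hypothetical example: 32768 PIDs, 64-byte values, 16 CPUs
shared = estimate_map_bytes(32768, 64, 16, per_cpu=False)
percpu = estimate_map_bytes(32768, 64, 16, per_cpu=True)
print(f"shared map:  {shared / 2**20:.1f} MiB")   # 2.0 MiB
print(f"per-CPU map: {percpu / 2**20:.1f} MiB")   # 32.0 MiB
```

The per-CPU multiplier is why lowering pid table size matters more on hosts with many cores.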

The eBPF collector also creates charts for each cgroup through an integration with the cgroups.plugin. This integration helps you understand how a specific cgroup interacts with the Linux kernel.

The integration with cgroups.plugin is disabled by default to avoid creating overhead on your system. If you want to enable the integration with cgroups.plugin, change the cgroups setting to yes.

[global]

cgroups = yes

If you do not need to monitor specific metrics for your cgroups, you can enable cgroups inside ebpf.d.conf, and then disable the plugin for a specific thread by following the steps in the Configuration section.

When an integration is enabled, your dashboard will also show the following cgroups and apps charts using low-level Linux metrics:

Note: The parenthetical accompanying each bulleted item provides the chart name.

- mem
  - Number of processes killed because the system ran out of memory. (oomkills)
- process
  - Number of processes created with do_fork. (process_create)
  - Number of threads created with do_fork or clone(2), depending on your system's kernel version. (thread_create)
  - Number of times that a process called do_exit. (task_exit)
  - Number of times that a process called release_task. (task_close)
  - Number of times that an error happened while creating a thread or process. (task_error)
- swap
  - Number of calls to swap_readpage. (swap_read_call)
  - Number of calls to swap_writepage. (swap_write_call)
- network
  - Number of outbound connections using TCP/IPv4. (outbound_conn_ipv4)
  - Number of outbound connections using TCP/IPv6. (outbound_conn_ipv6)
  - Number of bytes sent. (total_bandwidth_sent)
  - Number of bytes received. (total_bandwidth_recv)
  - Number of calls to tcp_sendmsg. (bandwidth_tcp_send)
  - Number of calls to tcp_cleanup_rbuf. (bandwidth_tcp_recv)
  - Number of calls to tcp_retransmit_skb. (bandwidth_tcp_retransmit)
  - Number of calls to udp_sendmsg. (bandwidth_udp_send)
  - Number of calls to udp_recvmsg. (bandwidth_udp_recv)
- file access
  - Number of calls to open files. (file_open)
  - Number of calls to open files that returned errors. (open_error)
  - Number of files closed. (file_closed)
  - Number of calls to close files that returned errors. (file_error_closed)
- vfs
  - Number of calls to vfs_unlink. (file_deleted)
  - Number of calls to vfs_write. (vfs_write_call)
  - Number of calls to write a file that returned errors. (vfs_write_error)
  - Number of calls to vfs_read. (vfs_read_call)
  - Number of calls to read a file that returned errors. (vfs_read_error)
  - Number of bytes written with vfs_write. (vfs_write_bytes)
  - Number of bytes read with vfs_read. (vfs_read_bytes)
  - Number of calls to vfs_fsync. (vfs_fsync)
  - Number of calls to sync a file that returned errors. (vfs_fsync_error)
  - Number of calls to vfs_open. (vfs_open)
  - Number of calls to open a file that returned errors. (vfs_open_error)
  - Number of calls to vfs_create. (vfs_create)
  - Number of calls to create a file that returned errors. (vfs_create_error)
- page cache
  - Ratio of pages accessed. (cachestat_ratio)
  - Number of modified pages ("dirty"). (cachestat_dirties)
  - Number of accessed pages. (cachestat_hits)
  - Number of pages brought from disk. (cachestat_misses)
- directory cache
  - Ratio of files available in directory cache. (dc_hit_ratio)
  - Number of files accessed. (dc_reference)
  - Number of files accessed that were not in cache. (dc_not_cache)
  - Number of files not found. (dc_not_found)
- ipc shm
  - Number of calls to shm_get. (shmget_call)
  - Number of calls to shm_at. (shmat_call)
  - Number of calls to shm_dt. (shmdt_call)
  - Number of calls to shm_ctl. (shmctl_call)

The eBPF collector enables and runs the following eBPF programs by default:

- fd: This eBPF program creates charts that show information about calls to open files.
- mount: This eBPF program creates charts that show calls to the syscalls mount(2) and umount(2).
- shm: This eBPF program creates charts that show calls to the syscalls shmget(2), shmat(2), shmdt(2), and shmctl(2).
- sync: This eBPF program monitors calls to the syscalls sync(2), fsync(2), fdatasync(2), syncfs(2), msync(2), and sync_file_range(2).
- network viewer: This eBPF program creates charts with information about TCP and UDP functions, including the bandwidth consumed by each.
- vfs: This eBPF program creates charts that show information about VFS (Virtual File System) functions.
- process: This eBPF program creates charts that show information about process life. When in return mode, it also creates charts showing errors when these operations are executed.
- hardirq: This eBPF program creates charts that show information about time spent servicing individual hardware interrupt requests (hard IRQs).
- softirq: This eBPF program creates charts that show information about time spent servicing individual software interrupt requests (soft IRQs).
- oomkill: This eBPF program creates a chart that shows OOM kills for all applications recognized via the apps.plugin integration. Note that this program will show application charts regardless of whether apps integration is turned on or off.

You can also enable the following eBPF programs:

- cachestat: Netdata's eBPF data collector creates charts about the memory page cache. When the integration with apps.plugin is enabled, this collector creates charts for the whole host and for each application.
- dcstat: This eBPF program creates charts that show information about file access using the directory cache. It attaches kprobes to lookup_fast() and d_lookup() to identify whether files are inside the directory cache, outside it, or not found.
- disk: This eBPF program creates charts that show information about disk latency, independent of the filesystem.
- filesystem: This eBPF program creates charts that show information about the latency of some filesystems.
- swap: This eBPF program creates charts that show information about swap access.
- mdflush: This eBPF program creates charts that show information about multi-device software flushes.

You can configure each thread of the eBPF data collector. This allows you to override global options defined in /etc/netdata/ebpf.d.conf and configure specific options for each thread.

To configure an eBPF thread:

- Navigate to the Netdata config directory.

   cd /etc/netdata

- Use the edit-config script to edit a thread configuration file. The following configuration files are available:

  - network.conf: Configuration for the network thread. This config file overrides the global options and also lets you specify which network the eBPF collector monitors.
  - process.conf: Configuration for the process thread.
  - cachestat.conf: Configuration for the cachestat thread.
  - dcstat.conf: Configuration for the dcstat thread.
  - disk.conf: Configuration for the disk thread.
  - fd.conf: Configuration for the file descriptor thread.
  - filesystem.conf: Configuration for the filesystem thread.
  - hardirq.conf: Configuration for the hardirq thread.
  - softirq.conf: Configuration for the softirq thread.
  - sync.conf: Configuration for the sync thread.
  - vfs.conf: Configuration for the vfs thread.

   ./edit-config FILE.conf

The network configuration has specific options to configure which network(s) the eBPF collector monitors. These options are divided into the following sections:

You can configure the information shown on outbound and inbound charts with the settings in this section.

[network connections]

maximum dimensions = 500

resolve hostname ips = no

ports = 1-1024 !145 !domain

hostnames = !example.com

ips = !127.0.0.1/8 10.0.0.0/8 172.16.0.0/12 192.168.0.0/16 fc00::/7

When you define a ports setting, Netdata will collect network metrics for that specific port. For example, if you write ports = 19999, Netdata will collect only connections for itself. The hostnames setting accepts simple patterns. The ports and ips settings accept negation (!) to deny specific values, or an asterisk alone to define all values.

In the above example, Netdata will collect metrics for all ports between 1 and 1024, with the exception of 53 (domain) and 145.
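The ports filter can be read as a set of included entries minus a set of negated exclusions. The Python sketch below interprets ports = 1-1024 !145 !domain that way. It assumes that an exclusion always overrides an inclusion, which matches the outcome described above. It resolves service names through a small hypothetical lookup table instead of /etc/services, so it illustrates the semantics rather than reproducing Netdata's parser.

```python
# Hypothetical stand-in for /etc/services lookups (service name -> port)
SERVICES = {"domain": 53, "http": 80, "https": 443}


def parse_port_token(token: str) -> range:
    """Turn '*', '80', '1-1024', or a service name into a range of ports."""
    if token == "*":
        return range(1, 65536)
    if "-" in token:
        start, end = token.split("-", 1)
        return range(int(start), int(end) + 1)
    if token.isdigit():
        return range(int(token), int(token) + 1)
    port = SERVICES[token]  # raises KeyError for names missing from the table
    return range(port, port + 1)


def parse_ports(setting: str):
    """Split a 'ports' value into included and excluded port sets."""
    included, excluded = set(), set()
    for token in setting.split():
        target = excluded if token.startswith("!") else included
        target.update(parse_port_token(token.lstrip("!")))
    return included, excluded


def port_monitored(port: int, included: set, excluded: set) -> bool:
    """Assumption: an exclusion always overrides an inclusion."""
    return port in included and port not in excluded


inc, exc = parse_ports("1-1024 !145 !domain")
print(port_monitored(22, inc, exc))     # True
print(port_monitored(53, inc, exc))     # False (domain)
print(port_monitored(145, inc, exc))    # False
print(port_monitored(19999, inc, exc))  # False (outside 1-1024)
```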

The following options are available:

- ports: Define the destination ports for Netdata to monitor.
- hostnames: The list of hostnames that can be resolved to an IP address.
- ips: The IP or range of IPs that you want to monitor. You can use IPv4 or IPv6 addresses, use dashes to define a range of IPs, or use CIDR values. By default, only data for private IP addresses is collected, but this can be changed with the ips setting.
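The ips setting mixes CIDR blocks, dash ranges, and negations. The Python sketch below uses the standard ipaddress module to test an address against such a list. Like the ports example, it assumes that any matching negated entry overrides the inclusions. It illustrates the semantics and is not Netdata's implementation.

```python
import ipaddress


def parse_entry(entry: str):
    """Build a matcher for '*', a CIDR block, a dash range, or a single address."""
    if entry == "*":
        return lambda ip: True
    if "-" in entry:
        first, last = (ipaddress.ip_address(part) for part in entry.split("-", 1))
        # Compare only same-family addresses; ordering v4 against v6 raises TypeError.
        return lambda ip: ip.version == first.version and first <= ip <= last
    # strict=False accepts host bits, as in the default '!127.0.0.1/8'
    network = ipaddress.ip_network(entry, strict=False)
    return lambda ip: ip.version == network.version and ip in network


def parse_ips(setting: str):
    """Split an 'ips' value into lists of included and excluded matchers."""
    included, excluded = [], []
    for token in setting.split():
        target = excluded if token.startswith("!") else included
        target.append(parse_entry(token.lstrip("!")))
    return included, excluded


def ip_monitored(address: str, included, excluded) -> bool:
    """Assumption: any matching negated entry overrides the inclusions."""
    ip = ipaddress.ip_address(address)
    if any(match(ip) for match in excluded):
        return False
    return any(match(ip) for match in included)


DEFAULT = "!127.0.0.1/8 10.0.0.0/8 172.16.0.0/12 192.168.0.0/16 fc00::/7"
inc, exc = parse_ips(DEFAULT)
print(ip_monitored("192.168.1.20", inc, exc))  # True (private range)
print(ip_monitored("127.0.0.1", inc, exc))     # False (negated loopback)
print(ip_monitored("8.8.8.8", inc, exc))       # False (public address)
print(ip_monitored("fd12::1", inc, exc))       # True (inside fc00::/7)
```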

By default, Netdata displays up to 500 dimensions on network connection charts. If there are more possible dimensions, they will be bundled into the other dimension. You can increase the number of shown dimensions by changing the maximum dimensions setting.
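The bundling described above amounts to keeping a limited number of dimensions and summing the rest into other. The Python sketch below assumes that dimensions are ranked by their current value and that other is added on top of the kept dimensions. Netdata may choose which dimensions to keep differently, for example by order of discovery, so both rules are assumptions. The function name is hypothetical.

```python
def bundle_dimensions(values: dict, maximum: int) -> dict:
    """Keep at most `maximum` dimensions and fold the remainder into 'other'.

    Assumptions: ranking by value (largest first); 'other' does not count
    toward `maximum`.
    """
    if len(values) <= maximum:
        return dict(values)
    ranked = sorted(values.items(), key=lambda item: item[1], reverse=True)
    kept = dict(ranked[:maximum])
    kept["other"] = sum(value for _, value in ranked[maximum:])
    return kept


traffic = {"10.0.0.1": 500, "10.0.0.2": 40, "10.0.0.3": 300, "10.0.0.4": 10}
print(bundle_dimensions(traffic, maximum=2))
# {'10.0.0.1': 500, '10.0.0.3': 300, 'other': 50}
```

Whatever the ranking rule, the total across all dimensions is preserved, so the chart's overall traffic stays accurate.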

By default, the dimensions for the traffic charts are created using the destination IPs of the sockets. You can change this by setting resolve hostname ips = yes and restarting Netdata. After that, Netdata creates dimensions using hostnames whenever it can resolve an IP to its hostname.

Netdata uses the list of services in /etc/services to plot network connection charts. If this file does not contain the name for a particular service you use in your infrastructure, you will need to add it to the [service name] section.

For example, Netdata's default port (19999) is not listed in /etc/services. To associate that port with the Netdata service in network connection charts, and thus see the name of the service instead of its port, define it:

[service name]

19999 = Netdata

The sync configuration has specific options to disable monitoring for syscalls. All syscalls are monitored by default.

[syscalls]

sync = yes

msync = yes

fsync = yes

fdatasync = yes

syncfs = yes

sync_file_range = yes

The filesystem configuration has specific options to disable monitoring for filesystems; by default, all filesystems are monitored.

[filesystem]

btrfsdist = yes

ext4dist = yes

nfsdist = yes

xfsdist = yes

zfsdist = yes

The eBPF program nfsdist monitors only NFS mount points.

If the eBPF collector does not work, you can troubleshoot it by running the ebpf.plugin command and investigating its output.

cd /usr/libexec/netdata/plugins.d/

sudo su -s /bin/bash netdata

./ebpf.plugin

You can also use grep to search the Agent's error.log for messages related to eBPF monitoring.

grep -i ebpf /var/log/netdata/error.log

The eBPF collector only works on Linux systems and with specific Linux kernels. We support all kernels more recent than 4.11.0, and all kernels on CentOS 7.6 or later.

You can run our helper script to determine whether your system can support eBPF monitoring. If it returns no output, your system is ready to compile and run the eBPF collector.

curl -sSL https://raw.githubusercontent.com/netdata/kernel-collector/master/tools/check-kernel-config.sh | sudo bash

If you see a warning about a missing kernel configuration (KPROBES KPROBES_ON_FTRACE HAVE_KPROBES BPF BPF_SYSCALL BPF_JIT), you will need to recompile your kernel to support this configuration. The process of recompiling Linux kernels varies based on your distribution and version. Read the documentation for your system's distribution to learn more about the specific workflow for recompiling the kernel, ensuring that you set all the necessary configuration options.
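If you prefer to inspect these options yourself, many distributions ship the kernel build configuration as /boot/config-RELEASE, while some expose /proc/config.gz instead. The Python sketch below assumes the /boot layout and reports which of the options named above are not set to y. It is a convenience illustration, not a replacement for the helper script.

```python
import platform
from pathlib import Path

REQUIRED = ["KPROBES", "KPROBES_ON_FTRACE", "HAVE_KPROBES", "BPF", "BPF_SYSCALL", "BPF_JIT"]


def missing_options(config_text: str, required=REQUIRED) -> list:
    """Return the required options that are not set to 'y' in kernel .config text."""
    enabled = set()
    for line in config_text.splitlines():
        line = line.strip()
        if line.startswith("CONFIG_") and line.endswith("=y"):
            enabled.add(line[len("CONFIG_"):-len("=y")])
    return [name for name in required if name not in enabled]


if __name__ == "__main__":
    # Assumption: the distribution installs the config at /boot/config-<release>
    path = Path("/boot/config-" + platform.release())
    if path.exists():
        print(missing_options(path.read_text()) or "all listed options are enabled")
    else:
        print(f"{path} not found; use the helper script instead")
```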

The eBPF collector also requires both the tracefs and debugfs filesystems. Try mounting the tracefs and debugfs filesystems using the commands below:

sudo mount -t debugfs nodev /sys/kernel/debug

sudo mount -t tracefs nodev /sys/kernel/tracing

If they are already mounted, you will see an error. You can also configure your system's /etc/fstab configuration to mount these filesystems on startup. More information can be found in the ftrace documentation.
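To check whether the two filesystems are already mounted before running the commands above, you can look for their types in /proc/mounts, where the third field of each line is the filesystem type. A minimal Python sketch with hypothetical helper names:

```python
from pathlib import Path

NEEDED = {"debugfs", "tracefs"}


def mounted_types(mounts_text: str) -> set:
    """Collect filesystem types (third field) from /proc/mounts-formatted text."""
    types = set()
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) >= 3:
            types.add(fields[2])
    return types


def missing_filesystems(mounts_text: str) -> set:
    """Return the required filesystem types that are not mounted."""
    return NEEDED - mounted_types(mounts_text)


if __name__ == "__main__":
    mounts = Path("/proc/mounts")
    if mounts.exists():
        print(sorted(missing_filesystems(mounts.read_text())) or "debugfs and tracefs are mounted")
```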

The eBPF collector creates charts on different menus, like System Overview, Memory, MD arrays, Disks, Filesystem, Mount Points, Networking Stack, systemd Services, and Applications.

The collector stores the actual value inside of its process, but charts only show the difference between the values collected in the previous and current seconds.
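Because the collector's stored values only grow, each chart point is the difference between two consecutive readings. A minimal Python sketch of that bookkeeping, using a hypothetical class name:

```python
from typing import Optional


class IncrementalCounter:
    """Turn a monotonically increasing total into per-interval values (hypothetical helper)."""

    def __init__(self) -> None:
        self.previous: Optional[int] = None

    def update(self, total: int) -> int:
        """Record the latest cumulative total and return the change since the last call."""
        delta = 0 if self.previous is None else total - self.previous
        self.previous = total
        return delta


counter = IncrementalCounter()
print([counter.update(total) for total in (100, 130, 130, 175)])
# [0, 30, 0, 45]
```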

Not all charts within the System Overview menu are enabled by default. Charts that rely on kprobes are disabled by default because they add around 100ns of overhead to each function call. This is a small number from a human's perspective, but these functions are called many times and can have a noticeable impact on the host. See the configuration section for details about how to enable them.

Internally, the Linux kernel treats both processes and threads as tasks. To create a thread, the kernel offers a few system calls: fork(2), vfork(2), and clone(2). To generate this chart, the eBPF collector uses the following tracepoints and kprobes:

- sched/sched_process_fork: Tracepoint called after a call to fork(2), vfork(2), or clone(2).
- sched/sched_process_exec: Tracepoint called after an exec-family syscall.
- kprobe/kernel_clone: This has been the main fork() routine since kernel 5.10.0 was released.
- kprobe/_do_fork: Like kernel_clone, but this was the main function between kernels 4.2.0 and 5.9.16.
- kprobe/do_fork: This was the main function before kernel 4.2.0.
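The kprobe target therefore depends on the running kernel. The Python sketch below encodes the version boundaries listed above to pick a function name. It assumes a MAJOR.MINOR[.PATCH] release string and ignores distribution backports, which can move these boundaries. The function name is hypothetical.

```python
import platform
import re


def fork_kprobe_target(release: str) -> str:
    """Pick the fork routine to probe, following the boundaries listed above."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    version = tuple(int(part or 0) for part in match.groups())
    if version >= (5, 10, 0):
        return "kernel_clone"
    if version >= (4, 2, 0):
        return "_do_fork"
    return "do_fork"


print(platform.release(), "->", fork_kprobe_target(platform.release()))
```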

Ending a task requires two steps. The first is a call to the internal function do_exit, which notifies the operating system that the task is finishing its work. The second step is to release the kernel information with the internal function release_task. The difference between the two dimensions can help you discover zombie processes. To get the metrics, the collector uses:

- sched/sched_process_exit: Tracepoint called after a task exits.
- kprobe/release_task: This function is called when a process exits, as the kernel still needs to remove the process descriptor.

The functions responsible for ending tasks do not return values, so this chart contains information about failures on process and thread creation only.

Inside the swap submenu, the eBPF plugin creates the chart swapcalls. This chart shows when processes call the functions swap_readpage and swap_writepage, which are responsible for doing I/O in swap memory. To collect the exact moment that an access to swap happens, the collector attaches kprobes to these functions.

The following tracepoints are used to measure time usage for soft IRQs:

- irq/softirq_entry: Called before the softirq handler.
- irq/softirq_exit: Called when the softirq handler returns.

The following tracepoints are used to measure the latency of servicing a hardware interrupt request (hard IRQ).

- irq/irq_handler_entry: Called immediately before the IRQ action handler.
- irq/irq_handler_exit: Called immediately after the IRQ action handler returns.
- irq_vectors: These are traces from irq_handler_entry and irq_handler_exit when an IRQ is handled. The following elements from vector are triggered:
  - irq_vectors/local_timer_entry
  - irq_vectors/local_timer_exit
  - irq_vectors/reschedule_entry
  - irq_vectors/reschedule_exit
  - irq_vectors/call_function_entry
  - irq_vectors/call_function_exit
  - irq_vectors/call_function_single_entry
  - irq_vectors/call_function_single_exit
  - irq_vectors/irq_work_entry
  - irq_vectors/irq_work_exit
  - irq_vectors/error_apic_entry
  - irq_vectors/error_apic_exit
  - irq_vectors/thermal_apic_entry
  - irq_vectors/thermal_apic_exit
  - irq_vectors/threshold_apic_entry
  - irq_vectors/threshold_apic_exit
  - irq_vectors/deferred_error_entry
  - irq_vectors/deferred_error_exit
  - irq_vectors/spurious_apic_entry
  - irq_vectors/spurious_apic_exit
  - irq_vectors/x86_platform_ipi_entry
  - irq_vectors/x86_platform_ipi_exit
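Both IRQ charts rest on the same idea: record a timestamp at the entry tracepoint and subtract it at the matching exit tracepoint. The Python sketch below models that pairing over a list of hypothetical events keyed by CPU and vector. In the real collector, this bookkeeping happens in eBPF maps inside the kernel, so treat the sketch as a conceptual illustration only.

```python
from collections import defaultdict

# Hypothetical event format: (timestamp_ns, cpu, vector, kind), kind is "entry" or "exit".


def time_per_vector(events):
    """Sum the time spent between matching entry/exit events for each vector."""
    started = {}               # (cpu, vector) -> entry timestamp
    totals = defaultdict(int)  # vector -> accumulated nanoseconds
    for timestamp, cpu, vector, kind in events:
        key = (cpu, vector)
        if kind == "entry":
            started[key] = timestamp
        elif kind == "exit" and key in started:
            totals[vector] += timestamp - started.pop(key)
        # An exit without a recorded entry (tracing began mid-handler) is ignored.
    return dict(totals)


events = [
    (1_000, 0, "NET_RX", "entry"),
    (1_400, 0, "NET_RX", "exit"),
    (2_000, 1, "TIMER", "entry"),
    (2_050, 1, "TIMER", "exit"),
    (3_000, 0, "NET_RX", "entry"),
    (3_100, 0, "NET_RX", "exit"),
]
print(time_per_vector(events))
# {'NET_RX': 500, 'TIMER': 50}
```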

To monitor shared memory system call counts, Netdata attaches tracing to the following functions:

- shmget: Runs when shmget is called.
- shmat: Runs when shmat is called.
- shmdt: Runs when shmdt is called.
- shmctl: Runs when shmctl is called.

In the memory submenu, the eBPF plugin creates two submenus, page cache and synchronization, with the following organization:

- Page Cache
  - Page cache ratio
  - Dirty pages
  - Page cache hits
  - Page cache misses
- Synchronization
  - File sync
  - Memory map sync
  - File system sync
  - File range sync

When the processor needs to read or write a location in main memory, it checks for a corresponding entry in the page cache. If the entry is there, a page cache hit has occurred and the read is from the cache.

A page cache hit is when the page cache is successfully accessed with a read operation. We do not count pages that were added relatively recently.

A "dirty page" is a page in the page cache that was modified after being created. Since non-dirty pages in the page cache have identical copies in secondary storage (e.g. hard disk drive or solid-state drive), discarding and reusing their space is much quicker than paging out application memory, and is often preferred over flushing the dirty pages into secondary storage and reusing their space.

On cachestat_dirties, Netdata shows the number of pages that were modified. This chart shows the number of calls to the function mark_buffer_dirty.

When the processor needs to read or write a specific memory address, it checks for a corresponding entry in the page cache. If the processor finds the entry (page cache hit), it reads the entry from the cache. If there is no entry (page cache miss), the kernel allocates a new entry and copies data from the disk. Netdata calculates the percentage of accessed pages that are cached in memory. The ratio is calculated by counting the accessed cached pages (without counting dirty pages and pages added because of read misses) and dividing by total accesses without dirty pages.

$$
\text{ratio} = \frac{\text{total accessed pages} - \text{dirty pages} - \text{missed pages}}{\text{total accessed pages} - \text{dirty pages}}
$$

The chart cachestat_ratio shows how processes are accessing the page cache. In a normal scenario, we expect values around 100%, which means that the majority of the work on the machine is processed in memory. To calculate the ratio, Netdata attaches kprobes to these kernel functions:

- add_to_page_cache_lru: Page addition.
- mark_page_accessed: Access to cache.
- account_page_dirtied: Dirty (modified) pages.
- mark_buffer_dirty: Writes to page cache.

A page cache miss means that a page was not in memory when the process tried to access it. This chart shows the difference between the number of calls to the functions add_to_page_cache_lru and account_page_dirtied.
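The Python sketch below combines the four kprobe counters to derive the page cache values for one interval. It follows the arithmetic used by the BCC cachestat tool: total accesses = mark_page_accessed - mark_buffer_dirty, and misses = add_to_page_cache_lru - account_page_dirtied. Netdata's own code may clamp or round differently. The 100% reported for an interval with no accesses is an assumption.

```python
def cachestat(apcl: int, mpa: int, apd: int, mbd: int) -> dict:
    """Derive page cache metrics from per-interval kprobe call counts.

    apcl: add_to_page_cache_lru calls (pages added)
    mpa:  mark_page_accessed calls    (cache accesses)
    apd:  account_page_dirtied calls  (pages dirtied)
    mbd:  mark_buffer_dirty calls     (writes to the page cache)
    """
    total = max(mpa - mbd, 0)    # accesses, not counting dirties
    misses = max(apcl - apd, 0)  # pages added because of read misses
    hits = total - misses
    if hits < 0:                 # read-ahead can inflate misses; clamp as BCC does
        misses, hits = total, 0
    ratio = 100.0 * hits / total if total > 0 else 100.0  # idle value: assumption
    return {"ratio": ratio, "dirties": mbd, "hits": hits, "misses": misses}


print(cachestat(apcl=120, mpa=1_000, apd=20, mbd=100))
# ratio is about 88.9 (800 hits out of 900 accesses), with 100 misses and 100 dirties
```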

This chart shows calls to the synchronization methods fsync(2) and fdatasync(2), which transfer all modified page caches for the files to disk devices. These calls block until the disk reports that the transfer has been completed. They flush data for specific file descriptors.

The chart shows calls to msync(2) syscalls. This syscall flushes changes to a file that was mapped into memory using mmap(2).

This chart monitors calls that commit filesystem caches to disk. Netdata attaches tracing for sync(2) and syncfs(2).

This chart shows calls to sync_file_range(2), which synchronizes file segments with disk.

Note: This is the most dangerous syscall to synchronize data, according to its manual.
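To watch these charts move, you can issue some of the calls yourself. The Python sketch below uses the standard os and mmap modules on a temporary file to call fsync(2), fdatasync(2), msync(2) (through mmap.flush), and sync(2). It assumes a Linux host. The Python standard library has no direct wrappers for syncfs(2) or sync_file_range(2), so those calls are not exercised. Note that sync(2) flushes every filesystem, which can take a while on a busy machine.

```python
import mmap
import os
import tempfile


def exercise_sync_calls() -> bytes:
    """Write a small file and flush it with several synchronization calls."""
    with tempfile.NamedTemporaryFile() as handle:
        handle.write(b"netdata sync test\n")
        handle.flush()               # move Python's buffer into the page cache
        fd = handle.fileno()
        os.fsync(fd)                 # fsync(2): data and metadata
        if hasattr(os, "fdatasync"):
            os.fdatasync(fd)         # fdatasync(2): data only (available on Linux)
        with mmap.mmap(fd, 0) as mapped:
            mapped[0:7] = b"NETDATA"  # modify the file through the shared mapping
            mapped.flush()            # msync(2) on Unix
        os.sync()                    # sync(2): flush all filesystems
        handle.seek(0)
        return handle.read()


print(exercise_sync_calls())
# b'NETDATA sync test\n'
```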

The eBPF plugin shows multi-device flushes happening in real time. This can be used to explain some spikes happening in disk latency charts.

By default, MD flush is disabled. To enable it, configure your /etc/netdata/ebpf.d.conf file as:

[global]

mdflush = yes

To collect data related to Linux multi-device (MD) flushing, the following kprobe is used:

kprobe/md_flush_request: called whenever a request for flushing multi-device data is made.

The eBPF plugin also shows a chart in the Disk section when the disk thread is enabled.

This will create the chart disk_latency_io for each disk on the host. The following tracepoints are used:

- block/block_rq_issue: IO request operation to a device drive.
- block/block_rq_complete: IO operation completed by device.

Disk latency is, under most circumstances, the single most important metric to focus on when it comes to storage performance. For hard drives, an average latency between 10 and 20 ms can be considered acceptable. For SSDs (solid-state drives), most workloads experience latencies below 1 ms and should never exceed 3 ms. The dimensions refer to time intervals.
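Conceptually, the disk chart pairs each block_rq_issue event with its block_rq_complete event and counts the elapsed time in a latency interval. The Python sketch below assumes power-of-two microsecond buckets and keys requests by a hypothetical request id. The collector's real bucket edges and keys may differ.

```python
from collections import Counter


def bucket_label(latency_us: int) -> str:
    """Map a latency to a power-of-two bucket label such as '4-8 us' (assumed edges)."""
    if latency_us < 1:
        return "0-1 us"
    upper = 1
    while upper <= latency_us:
        upper *= 2
    return f"{upper // 2}-{upper} us"


def latency_histogram(events):
    """events: (timestamp_us, request_id, kind) with kind 'issue' or 'complete'."""
    issued = {}
    histogram = Counter()
    for timestamp, request_id, kind in events:
        if kind == "issue":
            issued[request_id] = timestamp
        elif kind == "complete" and request_id in issued:
            histogram[bucket_label(timestamp - issued.pop(request_id))] += 1
    return histogram


events = [
    (0, "req-a", "issue"),
    (5, "req-b", "issue"),
    (6, "req-a", "complete"),      # 6 us
    (1_505, "req-b", "complete"),  # 1500 us
]
print(dict(latency_histogram(events)))
# {'4-8 us': 1, '1024-2048 us': 1}
```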

This group has charts showing how applications interact with the Linux kernel to open and close file descriptors. It also includes latency charts for several different filesystems.

We calculate the difference between the calling and return times, spanning disk I/O, file system operations (lock, I/O), run queue latency and all events related to the monitored action.

To measure the latency of executing some actions in an ext4 filesystem, the collector needs to attach kprobes and kretprobes for each of the following functions:

- ext4_file_read_iter: Function used to measure read latency.
- ext4_file_write_iter: Function used to measure write latency.
- ext4_file_open: Function used to measure open latency.
- ext4_sync_file: Function used to measure sync latency.

To measure the latency of executing some actions in a zfs filesystem, the collector needs to attach kprobes and kretprobes for each of the following functions:

- zpl_iter_read: Function used to measure read latency.
- zpl_iter_write: Function used to measure write latency.
- zpl_open: Function used to measure open latency.
- zpl_fsync: Function used to measure sync latency.

To measure the latency of executing some actions in an xfs filesystem, the collector needs to attach kprobes and kretprobes for each of the following functions:

- xfs_file_read_iter: Function used to measure read latency.
- xfs_file_write_iter: Function used to measure write latency.
- xfs_file_open: Function used to measure open latency.
- xfs_file_fsync: Function used to measure sync latency.

To measure the latency of executing some actions in an nfs filesystem, the collector needs to attach kprobes and kretprobes for each of the following functions:

- nfs_file_read: Function used to measure read latency.
- nfs_file_write: Function used to measure write latency.
- nfs_file_open: Function used to measure open latency.
- nfs4_file_open: Function used to measure open latency for NFS v4.
- nfs_getattr: Function used to measure sync latency.

To measure the latency of executing some actions in a btrfs filesystem, the collector needs to attach kprobes and kretprobes for each of the following functions:

Note: We are listing two functions used to measure read latency, but we use either btrfs_file_read_iter or generic_file_read_iter, depending on kernel version.

- btrfs_file_read_iter: Function used to measure read latency since kernel 5.10.0.
- generic_file_read_iter: Like btrfs_file_read_iter, but this function was used before kernel 5.10.0.
- btrfs_file_write_iter: Function used to write data.
- btrfs_file_open: Function used to open files.
- btrfs_sync_file: Function used to synchronize data to the filesystem.

To provide metrics related to open and close events, the collector attaches kprobes to the common functions used by the syscalls, instead of attaching a kprobe to each syscall:

- do_sys_open: Internal function used to open files.
- do_sys_openat2: Function called from do_sys_open since version 5.6.0.
- close_fd: Function used to close file descriptors since kernel 5.11.0.
- __close_fd: Function used to close files before version 5.11.0.

This chart shows the number of times some software tried and failed to open or close a file descriptor.

The Linux Virtual File System (VFS) is an abstraction layer on top of a concrete filesystem like the ones listed in the parent section, e.g. ext4.

In this section we list the mechanism by which we gather VFS data, and what charts are consequently created.

To measure the latency and total quantity of executing some VFS-level functions, ebpf.plugin needs to attach kprobes and kretprobes for each of the following functions:

- vfs_write: Function used for monitoring the number of successful and failed filesystem write calls, as well as the total number of written bytes.
- vfs_writev: Same function as vfs_write, but for vector writes (i.e. a single write operation using a group of buffers rather than one).
- vfs_read: Function used for monitoring the number of successful and failed filesystem read calls, as well as the total number of read bytes.
- vfs_readv: Same function as vfs_read, but for vector reads (i.e. a single read operation using a group of buffers rather than one).
- vfs_unlink: Function used for monitoring the number of successful and failed filesystem unlink calls.
- vfs_fsync: Function used for monitoring the number of successful and failed filesystem fsync calls.
- vfs_open: Function used for monitoring the number of successful and failed filesystem open calls.
- vfs_create: Function used for monitoring the number of successful and failed filesystem create calls.

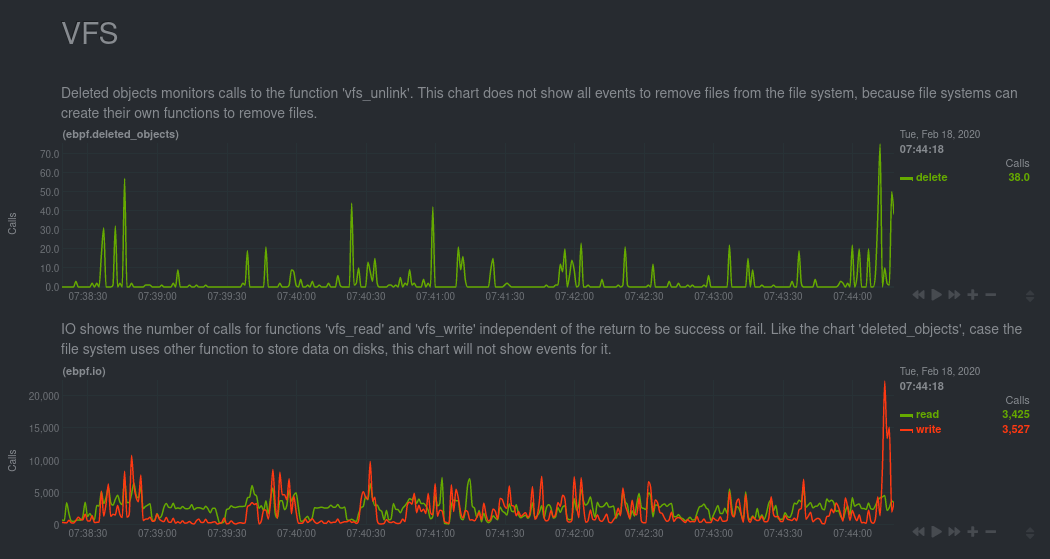

This chart monitors calls to vfs_unlink. This function is responsible for removing objects from the file system.

This chart shows the number of calls to the functions vfs_read and vfs_write.

This chart also monitors vfs_read and vfs_write but, instead of the number of calls, it shows the total amount of bytes read and written with these functions.

The Agent displays the number of bytes written as negative because they are moving down to disk.

The Agent counts and shows the number of instances where a running program experiences a read or write error.

This chart shows the number of calls to vfs_create. This function is responsible for creating files.

This chart shows the number of calls to vfs_fsync. This function is responsible for calling fsync(2) or fdatasync(2) on a file. You can see more details in the Synchronization section.

This chart shows the number of calls to vfs_open. This function is responsible for opening files.

Metrics for the directory cache are collected using a kprobe for lookup_fast, because we are only interested in the number of times this function is accessed. For d_lookup, we are interested not only in the number of times it is accessed but also in possible errors, so we need to attach a kretprobe. For this reason, the following functions are used:

- lookup_fast: Called to look at data inside the directory cache.
- d_lookup: Called when the desired file is not inside the directory cache.

When the directory cache chart shows 100%, every accessed file was present in the directory cache. Files that are not present in the directory cache either do not exist in the file system or were not accessed before.
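The hit ratio can be sketched from the probe counts. The Python example below assumes that lookup_fast calls count every directory cache reference and that d_lookup calls count lookups that missed the cache, so ratio = (references - misses) / references. This mirrors the chart descriptions above but is not taken from Netdata's source. Reporting 100% for an idle interval is also an assumption.

```python
def dcstat(references: int, slow_lookups: int, not_found: int) -> dict:
    """Derive directory cache chart values for one interval.

    references:   lookup_fast calls (files accessed)
    slow_lookups: d_lookup calls (files not in the directory cache)
    not_found:    d_lookup calls whose kretprobe saw no entry returned
    """
    hits = max(references - slow_lookups, 0)
    ratio = 100.0 * hits / references if references else 100.0  # idle value: assumption
    return {"dc_hit_ratio": ratio, "dc_reference": references,
            "dc_not_cache": slow_lookups, "dc_not_found": not_found}


print(dcstat(references=200, slow_lookups=50, not_found=5))
# {'dc_hit_ratio': 75.0, 'dc_reference': 200, 'dc_not_cache': 50, 'dc_not_found': 5}
```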

The following tracing is used to collect mount and unmount call counts:

Netdata monitors socket bandwidth by attaching tracing to internal functions.

This chart shows calls to tcp_v4_connect and tcp_v6_connect, which start connections for IPv4 and IPv6, respectively.

This chart demonstrates TCP and UDP connections that the host receives.

To collect this information, Netdata attaches tracing to inet_csk_accept.

This chart shows calls to the functions tcp_sendmsg, tcp_cleanup_rbuf, and tcp_close. These functions send and receive data, and close connections, when the TCP protocol is used.

This chart demonstrates calls to the following functions:

- tcp_sendmsg: Function responsible for sending data to a specified destination.
- tcp_cleanup_rbuf: We use this function instead of tcp_recvmsg, because the latter misses tcp_read_sock traffic, and we would also need to add more tracing to get the socket and packet size.
- tcp_close: Function responsible for closing connections.

This chart demonstrates calls to the function tcp_retransmit_skb, which is responsible for retransmitting TCP segments when the sender does not receive an acknowledgment from the receiver within the expected time.

This chart demonstrates calls to the functions udp_sendmsg and udp_recvmsg, which are responsible for sending and receiving data for connections when the UDP protocol is used.

Like the previous chart, this one also monitors udp_sendmsg and udp_recvmsg, but instead of showing the number of calls, it shows the number of bytes sent and received.
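Kprobes can only attach to functions the running kernel exports in its symbol table. Because of that, it can help to confirm that the functions behind these socket charts exist on a given host. In the kernel source, the connect functions are tcp_v4_connect and tcp_v6_connect, and retransmission is handled by tcp_retransmit_skb. The sketch below checks a kallsyms-format file for a list of names. The helper name is hypothetical and not part of Netdata. Finding a symbol is a necessary condition for attaching a probe, not a guarantee that the probe will attach.

```shell
#!/bin/sh
# Hypothetical helper (not part of Netdata): print each requested kernel
# function that does NOT appear as a symbol name (third column) in a
# kallsyms-format file such as /proc/kallsyms.
missing_ksyms() {
    file="$1"
    shift
    for sym in "$@"; do
        # awk exits 0 when the symbol is found, 1 otherwise.
        awk -v s="$sym" '$3 == s { found = 1; exit } END { exit (found ? 0 : 1) }' "$file" \
            || echo "$sym"
    done
}
```

Usage example: `missing_ksyms /proc/kallsyms tcp_v4_connect tcp_v6_connect inet_csk_accept tcp_sendmsg tcp_cleanup_rbuf tcp_close tcp_retransmit_skb udp_sendmsg udp_recvmsg`. Empty output means every name was found. Unprivileged users may see zeroed addresses in /proc/kallsyms, but the symbol names are still listed.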

The following tracepoint is related to the OOM killer terminating processes:

oom/mark_victim: Monitors when an oomkill event happens.
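Tracepoints are exposed as directories under the tracefs events tree. You can therefore check whether oom/mark_victim is available on a kernel before relying on this chart. The helper below is a hypothetical sketch. It assumes tracefs is mounted at /sys/kernel/tracing; older systems often expose it under /sys/kernel/debug/tracing instead. Reading tracefs usually requires root.

```shell
#!/bin/sh
# Hypothetical helper (not part of Netdata): succeed if the tracepoint's
# directory (e.g. "oom/mark_victim") exists under the given tracefs root.
tracepoint_exists() {
    root="${2:-/sys/kernel/tracing}"
    [ -d "$root/events/$1" ]
}
```

Usage example: `tracepoint_exists oom/mark_victim || tracepoint_exists oom/mark_victim /sys/kernel/debug/tracing`.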

eBPF monitoring is complex and produces a large volume of metrics. We've discovered scenarios where the eBPF plugin significantly increases kernel memory usage by several hundred MB.

If your node is experiencing high memory usage and there is no obvious culprit in the apps.mem chart, consider testing for high kernel memory usage by disabling eBPF monitoring. Then restart Netdata with sudo systemctl restart netdata and check whether system memory usage (see the system.ram chart) has dropped significantly.
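If you prefer the command line to the system.ram chart, you can make a rough before-and-after comparison by reading MemAvailable from /proc/meminfo. The helper name below is hypothetical. A single reading taken right after a restart is only indicative, because memory usage takes some time to settle.

```shell
#!/bin/sh
# Hypothetical helper (not part of Netdata): print MemAvailable, in kB,
# from /proc/meminfo or from any file in the same format.
mem_available_kb() {
    awk '/^MemAvailable:/ { print $2 }' "${1:-/proc/meminfo}"
}

# Example workflow (run the steps manually):
#   before=$(mem_available_kb)
#   # Disable the collector: set "ebpf = no" in the [plugins] section of netdata.conf
#   sudo systemctl restart netdata
#   after=$(mem_available_kb)
#   echo "MemAvailable changed by $(( after - before )) kB"
```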

Beginning with v1.31, kernel memory usage is configurable via the pid table size setting in ebpf.conf.
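Here is a minimal sketch of changing this setting. It assumes the default /etc/netdata configuration directory and that pid table size sits in the [global] section of ebpf.conf. The value 16384 is only an example of a smaller table, not a recommendation.

```shell
# Open ebpf.conf with Netdata's edit-config helper
# (assumes the default /etc/netdata configuration directory).
cd /etc/netdata
sudo ./edit-config ebpf.conf

# In the [global] section, set a smaller table, for example:
#     pid table size = 16384
# Then restart the Agent so the new size takes effect.
sudo systemctl restart netdata
```

A smaller table reduces the kernel memory reserved for per-PID data, at the cost of tracking fewer processes.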

When SELinux is enabled, it may prevent ebpf.plugin from starting correctly. Check the Agent's error.log file for errors like the ones below:

```
2020-06-14 15:32:08: ebpf.plugin ERROR : EBPF PROCESS : Cannot load program: /usr/libexec/netdata/plugins.d/pnetdata_ebpf_process.3.10.0.o (errno 13, Permission denied)
2020-06-14 15:32:19: netdata ERROR : PLUGINSD[ebpf] : read failed: end of file (errno 9, Bad file descriptor)
```

You can also check for errors related to ebpf.plugin inside /var/log/audit/audit.log:

```
type=AVC msg=audit(1586260134.952:97): avc: denied { map_create } for pid=1387 comm="ebpf.pl" scontext=system_u:system_r:unconfined_service_t:s0 tcontext=system_u:system_r:unconfined_service_t:s0 tclass=bpf permissive=0
type=SYSCALL msg=audit(1586260134.952:97): arch=c000003e syscall=321 success=no exit=-13 a0=0 a1=7ffe6b36f000 a2=70 a3=0 items=0 ppid=1135 pid=1387 auid=4294967295 uid=994 gid=990 euid=0 suid=0 fsuid=0 egid=990 sgid=990 fsgid=990 tty=(none) ses=4294967295 comm="ebpf_process.pl" exe="/usr/libexec/netdata/plugins.d/ebpf.plugin" subj=system_u:system_r:unconfined_service_t:s0 key=(null)
```

If you see similar errors, you will have to adjust SELinux's policies to enable the eBPF collector.
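To look for both symptoms from a shell, you can use the minimal sketch below. The helper names are hypothetical and not part of Netdata. The default paths assume the Agent logs to /var/log/netdata/error.log and auditd writes to /var/log/audit/audit.log.

```shell
#!/bin/sh
# Hypothetical helpers (not part of Netdata) for spotting the SELinux symptoms above.

# Count ebpf.plugin programs that failed to load with errno 13 (Permission denied).
ebpf_eacces_count() {
    grep -c 'ebpf.plugin.*Cannot load program.*errno 13,' "${1:-/var/log/netdata/error.log}" || true
}

# Count AVC denials for the bpf object class in an audit log.
bpf_avc_denials() {
    grep 'type=AVC' "${1:-/var/log/audit/audit.log}" | grep -c 'denied.*tclass=bpf' || true
}
```

Non-zero counts from either helper point to the policy problem described here. Running getenforce first tells you whether SELinux is in Enforcing mode at all.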

To enable ebpf.plugin to run on a distribution with SELinux enabled, take the following actions.

First, stop the Netdata Agent.

```
# systemctl stop netdata
```

Next, create a policy with the audit.log file you examined earlier.

```
# grep ebpf.plugin /var/log/audit/audit.log | audit2allow -M netdata_ebpf
```

This will create two new files: netdata_ebpf.te and netdata_ebpf.pp.

Edit the netdata_ebpf.te file to change the class and allow lines. The end of the netdata_ebpf.te file should look like this:

```
module netdata_ebpf 1.0;

require {
        type unconfined_service_t;
        class bpf { map_create map_read map_write prog_load prog_run };
}

#============= unconfined_service_t ==============
allow unconfined_service_t self:bpf { map_create map_read map_write prog_load prog_run };
```

Then compile your netdata_ebpf.te file with the following commands to create a binary that loads the new policies:

```
# checkmodule -M -m -o netdata_ebpf.mod netdata_ebpf.te
# semodule_package -o netdata_ebpf.pp -m netdata_ebpf.mod
```

Finally, load the new policy and start the Netdata Agent again:

```
# semodule -i netdata_ebpf.pp
# systemctl start netdata
```

Beginning with version 5.4, the Linux kernel has a feature called "lockdown," which may affect ebpf.plugin depending on how the kernel was compiled. The following table shows how the lockdown module impacts ebpf.plugin based on the selected options:

| Enforcing kernel lockdown | Enable lockdown LSM early in init | Default lockdown mode | Can ebpf.plugin run with this? |
|---|---|---|---|
| YES | NO | NO | YES |
| YES | YES | None | YES |
| YES | YES | Integrity | YES |
| YES | YES | Confidentiality | NO |

If you or your distribution compiled the kernel with the last combination, your system cannot load shared libraries required to run ebpf.plugin.
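On kernels built with the lockdown LSM, the mode currently in effect appears in /sys/kernel/security/lockdown, with the active entry in brackets, for example `none [integrity] confidentiality`. This runtime value also reflects a mode chosen at boot with the lockdown= kernel parameter. The sketch below reads that file and applies the rule from the table, where only confidentiality blocks ebpf.plugin. The helper names are hypothetical and not part of Netdata.

```shell
#!/bin/sh
# Hypothetical helper (not part of Netdata): print the active lockdown mode,
# i.e. the bracketed entry in /sys/kernel/security/lockdown.
lockdown_mode() {
    f="${1:-/sys/kernel/security/lockdown}"
    if [ ! -r "$f" ]; then
        echo "unavailable"   # lockdown LSM not built in, or securityfs not mounted
        return
    fi
    sed -n 's/.*\[\([a-z]*\)].*/\1/p' "$f"
}

# Succeed unless the active mode is "confidentiality".
ebpf_allowed_by_lockdown() {
    [ "$(lockdown_mode "$1")" != "confidentiality" ]
}
```

To see which compile-time options from the table your kernel uses, check the build configuration. Many distributions ship it as /boot/config-$(uname -r), where the relevant entries are CONFIG_SECURITY_LOCKDOWN_LSM, CONFIG_SECURITY_LOCKDOWN_LSM_EARLY, and the CONFIG_LOCK_DOWN_KERNEL_FORCE_* choice.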