Not found

-Oops! This page doesn't exist. Try going back to our home page.

- -You can learn how to make a 404 page like this in Custom 404 Pages.

- -

-  -

-  -

-  -

-Kairos creates self-coordinated, fully meshed clusters at the edge by using a combination of P2P technology, VPN, and Kubernetes.

-

-This design is made up of several components:

-

-- The Kairos base OS with support for different distribution flavors and k3s combinations (see our support matrix [here](/docs/reference/image_matrix)).

-- A Virtual private network interface ([EdgeVPN](https://github.com/mudler/edgevpn) which leverages [libp2p](https://github.com/libp2p/go-libp2p)).

-- K3s/CNI configured to work with the VPN interface.

-- A shared ledger accessible to all nodes in the p2p private network.

-

-By using libp2p as the transport layer, Kairos can abstract connections between the nodes and use it as a coordination mechanism. The shared ledger serves as a cache to store additional data, such as node tokens to join nodes to the cluster or the cluster topology, and is accessible to all nodes in the P2P private network. The VPN interface is automatically configured and self-coordinated, requiring zero-configuration and no user intervention.

-

-Moreover, any application at the OS level can use P2P functionalities by using Virtual IPs within the VPN. The user only needs to provide a generated shared token containing OTP seeds for rendezvous points used during connection bootstrapping between the peers. It's worth noting that the VPN is optional, and the shared ledger can be used to coordinate and set up other forms of networking between the cluster nodes, such as KubeVIP. (See this example [here](/docs/examples/multi-node-p2p-ha-kubevip))

-

-## Implementation

-

-Peer-to-peer (P2P) networking is used to coordinate and bootstrap nodes. When this functionality is enabled, there is a distributed ledger accessible over the nodes that can be programmatically accessed and used to store metadata.

-

-Kairos can automatically set up a VPN between nodes using a shared secret. This enables the nodes to automatically coordinate, discover, configure, and establish a network overlay spanning across multiple regions. [EdgeVPN](https://github.com/mudler/edgevpn) is used for this purpose.

-

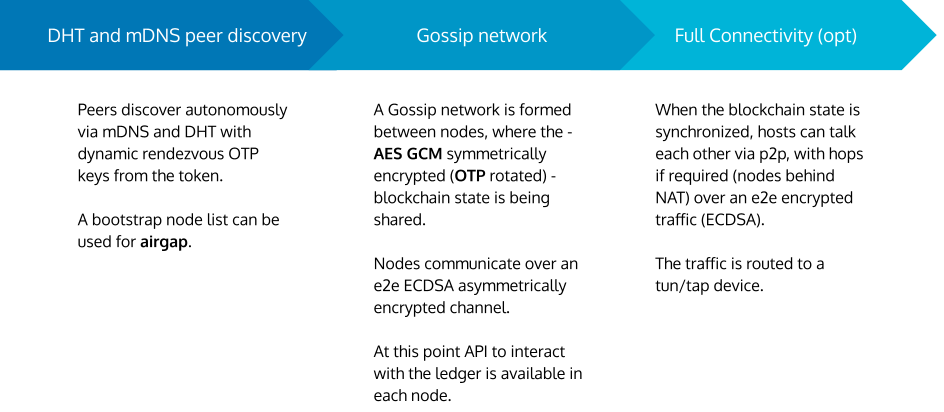

-The private network is bootstrapped in three phases, with discovery driven by a distributed hash table (DHT) and multicast DNS (mDNS), which can be selectively disabled or enabled. The three phases are:

-

-1. Discovery

-1. Gossip network

-1. Full connectivity

-

-During the discovery phase, which can occur via mDNS (for LAN) or DHT (for WAN), nodes discover each other by broadcasting their presence to the network.

-

-In the second phase, rendezvous points are rotated by OTP (one-time password). A shared token containing OTP seeds is used to generate these rendezvous points, which serve as a secure way to bootstrap connections between nodes. This is essential for establishing a secure and self-coordinated P2P network.

-

-In the third phase, a gossip network is formed among nodes, which shares shared ledger blocks symmetrically encrypted with AES. The key used to encrypt these blocks is rotated via OTP. This ensures that the shared ledger is secure and that each node has access to the most up-to-date version of the shared configuration. The ledger is used to store arbitrary metadata from the nodes of the network. On each update, a new block is created with the new information and propagated via gossip.

-

-Optionally, full connectivity can be established by bringing up a TUN interface, which routes packets via the libp2p network. This enables any application at the OS level to leverage P2P functionalities by using VirtualIPs accessible within the VPN.

-

-The coordination process in Kairos is designed to be resilient and self-coordinated, with no need for complex network configurations or control management interfaces. By using this approach, Kairos simplifies the process of deploying and managing Kubernetes clusters at the edge, making it easy for users to focus on running and scaling their applications.

-

-

-

-Kairos creates self-coordinated, fully meshed clusters at the edge by using a combination of P2P technology, VPN, and Kubernetes.

-

-This design is made up of several components:

-

-- The Kairos base OS with support for different distribution flavors and k3s combinations (see our support matrix [here](/docs/reference/image_matrix)).

-- A Virtual private network interface ([EdgeVPN](https://github.com/mudler/edgevpn) which leverages [libp2p](https://github.com/libp2p/go-libp2p)).

-- K3s/CNI configured to work with the VPN interface.

-- A shared ledger accessible to all nodes in the p2p private network.

-

-By using libp2p as the transport layer, Kairos can abstract connections between the nodes and use it as a coordination mechanism. The shared ledger serves as a cache to store additional data, such as node tokens to join nodes to the cluster or the cluster topology, and is accessible to all nodes in the P2P private network. The VPN interface is automatically configured and self-coordinated, requiring zero-configuration and no user intervention.

-

-Moreover, any application at the OS level can use P2P functionalities by using Virtual IPs within the VPN. The user only needs to provide a generated shared token containing OTP seeds for rendezvous points used during connection bootstrapping between the peers. It's worth noting that the VPN is optional, and the shared ledger can be used to coordinate and set up other forms of networking between the cluster nodes, such as KubeVIP. (See this example [here](/docs/examples/multi-node-p2p-ha-kubevip))

-

-## Implementation

-

-Peer-to-peer (P2P) networking is used to coordinate and bootstrap nodes. When this functionality is enabled, there is a distributed ledger accessible over the nodes that can be programmatically accessed and used to store metadata.

-

-Kairos can automatically set up a VPN between nodes using a shared secret. This enables the nodes to automatically coordinate, discover, configure, and establish a network overlay spanning across multiple regions. [EdgeVPN](https://github.com/mudler/edgevpn) is used for this purpose.

-

-The private network is bootstrapped in three phases, with discovery driven by a distributed hash table (DHT) and multicast DNS (mDNS), which can be selectively disabled or enabled. The three phases are:

-

-1. Discovery

-1. Gossip network

-1. Full connectivity

-

-During the discovery phase, which can occur via mDNS (for LAN) or DHT (for WAN), nodes discover each other by broadcasting their presence to the network.

-

-In the second phase, rendezvous points are rotated by OTP (one-time password). A shared token containing OTP seeds is used to generate these rendezvous points, which serve as a secure way to bootstrap connections between nodes. This is essential for establishing a secure and self-coordinated P2P network.

-

-In the third phase, a gossip network is formed among nodes, which shares shared ledger blocks symmetrically encrypted with AES. The key used to encrypt these blocks is rotated via OTP. This ensures that the shared ledger is secure and that each node has access to the most up-to-date version of the shared configuration. The ledger is used to store arbitrary metadata from the nodes of the network. On each update, a new block is created with the new information and propagated via gossip.

-

-Optionally, full connectivity can be established by bringing up a TUN interface, which routes packets via the libp2p network. This enables any application at the OS level to leverage P2P functionalities by using VirtualIPs accessible within the VPN.

-

-The coordination process in Kairos is designed to be resilient and self-coordinated, with no need for complex network configurations or control management interfaces. By using this approach, Kairos simplifies the process of deploying and managing Kubernetes clusters at the edge, making it easy for users to focus on running and scaling their applications.

-

-

- -

-

- -

-

- -

-

Oops! This page doesn't exist. Try going back to our home page.

- -You can learn how to make a 404 page like this in Custom 404 Pages.

-

We call Kairos a meta-Linux Distribution. Why meta? Because it sits as a container layer, turning any Linux distro into an immutable system distributed via container registries. With Kairos, the OS is the container image, which is used for new installations and upgrades.

The Kairos 'factory' enables you to build custom bootable-OS images for your edge devices, from your choice of OS (including openSUSE, Alpine and Ubuntu), and your choice of edge Kubernetes distribution—Kairos is totally agnostic.

Each node boots from the same image, so no more snowflakes in your clusters, and each system is immutable—it boots in a restricted, permissionless mode, where certain paths are not writeable. For instance, after an installation it's not possible to install additional packages in the system, and any configuration change is discarded after a reboot. This dramatically reduces the attack surface and the impact of malicious actors gaining access to the device.

Keeping simplicity while providing complex solutions is a key factor of Kairos. Onboarding of nodes can be done via QR code, manually, remotely via SSH, interactively, or completely automated with Kubernetes, with zero touch provisioning.

Kairos optionally supports P2P full-mesh out of the box. New devices wake up with a shared secret and distributed ledger of other nodes and clusters to look for—they form a unified overlay network that’s E2E encrypted to discover other devices, even spanning multiple networks, to bootstrap the cluster.

Each Kairos OS is created as easily as writing a Dockerfile—no custom recipes or arcane languages here. You can run and customize the container images locally with Docker, Podman, or your container engine of choice exactly how you do for apps already.

Your built OS is a container-based, single image that is distributed via container registries, so it plugs neatly into your existing CI/CD pipelines. It makes edge scale as repeatable and portable as driving containers. Customizing, mirroring of images, scanning vulnerabilities, gating upgrades, patching CVEs are some of the endless possibilities. Updating nodes is just as easy as selecting a new version via Kubernetes. Each node will pull the update from your repo, installing on A/B partitions for zero-risk upgrades with failover.

Use Kubernetes management principles to manage and provision your clusters. Kairos supports automatic node provisioning via CRDs; upgrade management via Kubernetes; node repurposing and machine auto scaling capabilities; and complete configuration management via cloud-init.

Kairos draws on the strength of the cloud-native ecosystem, not just for principles and approaches, but components. Cluster API is optionally supported as well, and can be used to manage Kubernetes clusters using native Kubernetes APIs with zero touch provisioning.

We move fast, but we try not to break stuff—particularly your nodes. Every change in the Kairos codebase runs through highly engineered automated testing before release to catch bugs earlier.

While Kairos has been engineered for large-scale use by DevOps and IT Engineering teams working in cloud, bare metal, edge and embedded systems environments, we welcome makers, hobbyists, and anyone in the community to participate in driving forward our vision of the immutable, decentralized, and containerized edge.

Kairos is a vibrant, active project with time and financial backing from Spectro Cloud, a Kubernetes management platform provider with a strong commitment to the open source community. It is a silver member of the CNCF and LF Edge, a Certified Kubernetes Service Provider, and a contributor to projects such as Cluster API. Find more about Spectro Cloud here.